Fit for machine learning

Data Transformation

For raw data to become effective input for machine learning models, it must undergo a critical transformation process. This process typically includes several key steps: data cleaning, preprocessing, and feature engineering.

Data cleaning removes errors, inconsistencies, and irrelevant information so that the dataset is accurate and reliable. Preprocessing follows: values are normalized (rescaled to a fixed range) or standardized (shifted to zero mean and unit variance) so that features share a consistent scale, which makes them easier for algorithms to interpret and analyze. Feature engineering then creates new features or modifies existing ones to better capture the underlying patterns in the data. This step can involve deriving new variables, aggregating data, or encoding categorical variables.
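The three steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not LIT code: the record layout, the column names (`amount`, `channel`), and the helper functions `clean`, `standardize`, and `one_hot` are all assumptions made for the example.

```python
from statistics import mean, pstdev

# Hypothetical raw records: one has a missing value, one is a duplicate.
raw = [
    {"id": 1, "amount": 120.0, "channel": "web"},
    {"id": 2, "amount": None, "channel": "store"},
    {"id": 3, "amount": 80.0, "channel": "store"},
    {"id": 3, "amount": 80.0, "channel": "store"},
    {"id": 4, "amount": 100.0, "channel": "web"},
]

def clean(records):
    """Data cleaning: drop rows with a missing amount and repeated ids."""
    seen, out = set(), []
    for r in records:
        if r["amount"] is None or r["id"] in seen:
            continue
        seen.add(r["id"])
        out.append(dict(r))  # copy so the raw input is left untouched
    return out

def standardize(records, key):
    """Preprocessing: add a zero-mean, unit-variance copy of one numeric column."""
    values = [r[key] for r in records]
    mu, sigma = mean(values), pstdev(values)
    for r in records:
        r[key + "_z"] = (r[key] - mu) / sigma if sigma else 0.0
    return records

def one_hot(records, key):
    """Feature engineering: encode a categorical column as 0/1 indicators."""
    categories = sorted({r[key] for r in records})
    for r in records:
        for c in categories:
            r[f"{key}_{c}"] = 1 if r[key] == c else 0
    return records

prepared = one_hot(standardize(clean(raw), "amount"), "channel")
```

Each stage takes and returns a list of records, so the stages compose in the same order the text describes them.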

These transformations matter because machine learning algorithms generally perform best on structured, clean, and relevant data. Raw data can be noisy and inconsistent, and it may obscure the patterns or relationships a model needs to learn. Refining it through these steps improves the model's ability to learn meaningful patterns, which leads to more accurate predictions and classifications and sets the stage for successful model training and deployment.

Normalization

Normalizing feature data before feeding it into a neural network matters for several reasons. First, it brings all features onto a similar scale, so that no feature dominates the learning process simply because its values span a larger range; the network can then weigh each feature on comparable terms. Second, it accelerates the convergence of gradient descent: with normalized inputs the loss surface is better conditioned (closer to spherical around its minimum), so optimization is more efficient. Third, it can improve generalization: inputs at inference time fall on the same scale the network saw during training, which helps it handle unseen data points and can reduce the likelihood of overfitting to the raw magnitudes of particular features. Overall, normalization is a critical preprocessing step that contributes significantly to the network's performance and stability.
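To make the "similar scale" point concrete, the sketch below applies two common normalization schemes, min-max scaling and z-score standardization, to two features with very different raw ranges. The feature names and values are invented for illustration, and this is generic Python rather than anything LIT-specific.

```python
from statistics import mean, pstdev

def min_max(values):
    """Rescale values linearly into [0, 1]."""
    lo, hi = min(values), max(values)
    span = hi - lo
    return [(v - lo) / span if span else 0.0 for v in values]

def z_score(values):
    """Shift to zero mean and scale to unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma if sigma else 0.0 for v in values]

# Hypothetical features on very different raw scales.
income = [30_000.0, 60_000.0, 90_000.0]
age = [25.0, 40.0, 55.0]

income_scaled = min_max(income)  # [0.0, 0.5, 1.0]
age_scaled = min_max(age)        # [0.0, 0.5, 1.0]

income_z = z_score(income)
age_z = z_score(age)
```

Before scaling, `income` is three orders of magnitude larger than `age`; afterwards both features occupy the same range, so neither dominates purely because of its units.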

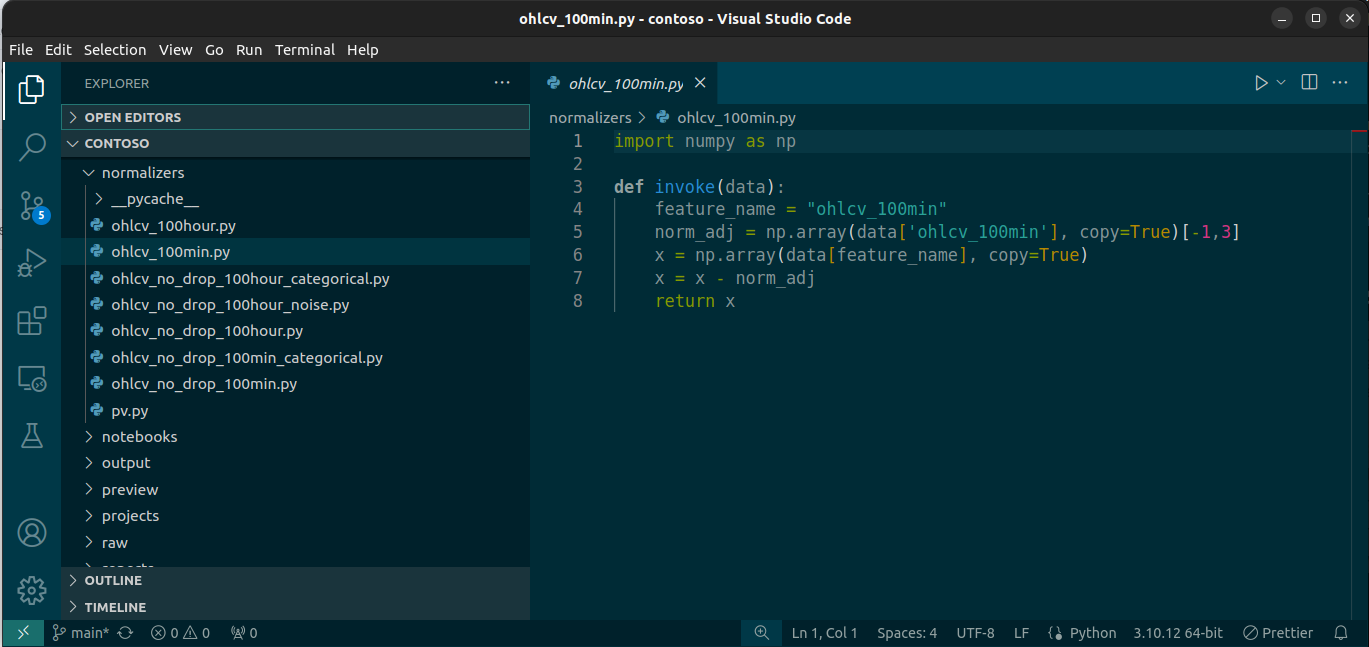

As with everything else in LIT, normalization is extensible via Python scripts. Users can write custom normalizers per feature, and the platform applies them consistently to both historical and real-time streaming data.
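The text does not show what a custom normalizer looks like in LIT, so the following is only a sketch of the general idea under stated assumptions: a normalizer is fitted once on historical values and the same object is then applied to both batch and streaming inputs. The class name `LogScaleNormalizer`, its `fit` method, and the `on_tick` handler are hypothetical and are not part of any documented LIT interface.

```python
import math

class LogScaleNormalizer:
    """Hypothetical per-feature normalizer: log-compress, then min-max scale."""

    def fit(self, history):
        # Learn the scaling bounds from historical data only.
        logged = [math.log1p(v) for v in history]
        self.lo, self.hi = min(logged), max(logged)
        return self

    def __call__(self, value):
        span = self.hi - self.lo
        scaled = (math.log1p(value) - self.lo) / span if span else 0.0
        # Clamp so streaming values outside the historical range stay in [0, 1].
        return min(1.0, max(0.0, scaled))

# Hypothetical historical values for a heavy-tailed feature.
historical_volumes = [0.0, 9.0, 99.0, 999.0]
normalize_volume = LogScaleNormalizer().fit(historical_volumes)

# Historical (batch) path.
batch = [normalize_volume(v) for v in historical_volumes]

# Streaming path: the same fitted object, one value at a time.
def on_tick(value):
    return normalize_volume(value)
```

Because both paths call the same fitted object, a value seen in a live stream is scaled exactly as it would have been in the historical dataset.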

The Same-Code-Path Guarantee

Most ML platforms struggle with train/serve skew—subtle differences between how features are computed during training versus production inference. These differences cause models to underperform in production despite excellent training metrics. Teams spend enormous effort maintaining parallel codebases and hoping they stay synchronized.

LIT eliminates this problem by design: training and inference use literally the same feature functions.

# This exact call happens during both build AND prediction:

sample = feature(adapter, index, feature.vars, feature_cache)

When you build training data, the platform calls your feature functions. When you deploy a model for inference, the platform calls the same feature functions with the same parameters. There is no separate "inference pipeline" to maintain.
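A minimal sketch of this pattern follows. Only the call shape `feature(adapter, index, feature.vars, feature_cache)` comes from the text above; the `Feature` wrapper, the `rolling_mean` function, the use of a plain list as the adapter, and the `build_training_rows` and `predict` helpers are assumptions made for illustration, not LIT's actual implementation.

```python
class Feature:
    """Hypothetical wrapper: a callable that carries its own parameters."""

    def __init__(self, fn, **params):
        self.fn = fn
        self.vars = params

    def __call__(self, adapter, index, feature_vars, feature_cache):
        # Cache by function, position, and parameters to avoid recomputation.
        key = (self.fn.__name__, index, tuple(sorted(feature_vars.items())))
        if key not in feature_cache:
            feature_cache[key] = self.fn(adapter, index, **feature_vars)
        return feature_cache[key]

def rolling_mean(adapter, index, window):
    """Mean of the last `window` values up to and including `index`."""
    start = max(0, index - window + 1)
    values = adapter[start:index + 1]
    return sum(values) / len(values)

features = [Feature(rolling_mean, window=3)]

def compute_row(adapter, index, feature_cache):
    # The single code path shared by training and inference.
    return [feature(adapter, index, feature.vars, feature_cache)
            for feature in features]

def build_training_rows(adapter):
    cache = {}
    return [compute_row(adapter, i, cache) for i in range(len(adapter))]

def predict(model, adapter):
    # Inference computes the newest row through the same compute_row call.
    return model(compute_row(adapter, len(adapter) - 1, {}))

prices = [10.0, 12.0, 14.0, 16.0]
training_rows = build_training_rows(prices)

# An identity "model" exposes exactly what inference would feed the model.
seen_at_inference = predict(lambda row: row, prices)
```

In this sketch the row computed at inference time is identical to the last training row, because both go through `compute_row` with the same feature objects and parameters.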

Why This Matters

No Drift: Feature logic can't diverge because there's only one implementation.

Instant Debugging: If a prediction seems wrong, you can trace exactly what the model saw—the same code that built training data computed the inference input.

Confident Deployment: Feature inputs in production match what the model saw during evaluation. The features behind a model that scored 0.85 AUC in testing will compute identically in production.

Simplified Maintenance: Update a feature once, and both training and inference automatically use the new logic.

This isn't achieved through careful discipline or code reviews—it's architecturally enforced. The platform doesn't have separate paths that could diverge.