Vibe Data Science

Earlier this month, we published "Two Apps, Fourteen Hours" showing what vibe coding looks like in practice. We hinted we were working on something else.

This is that something else: vibe data science.

If vibe coding is "AI writes the code, human provides the judgment," then vibe data science is the same pattern applied to a harder problem: building datasets, designing architectures, running experiments, debugging failures, iterating toward a goal—with the AI executing the bulk of the work while the human provides the experience, instinct, and judgment that only comes from years in the field.

This is new. And it changes what a small team can accomplish.

What follows isn't a demo or a proof-of-concept. It's not the MNIST tutorial version of data science. Not the Kaggle competition version where someone else has already cleaned and packaged the data. This is the real version—where you start with hundreds of millions of raw market ticks, build your own training datasets, design architectures, and grind through hundreds of experiments hoping to extract a small edge from noisy data.

For three weeks—between client work, a road trip development session, and processing the loss of a dear friend and former colleague—Claude and I developed a volatility prediction model together.

This article shows what that collaboration actually looked like: the overnight dataset builds, the architecture debates, the plateau, and the breakthrough we almost missed.

The Problem: Predicting Volatility

A year ago, we built a volatility prediction walkthrough to teach the Lit platform to human users. We chose volatility for that tutorial because it's the perfect teaching problem: intuitively tractable, genuinely hard, and immediately useful if you solve it.

This time, instead of teaching a human, we set out to teach Claude. Same problem, same platform—but we deliberately threw away our previous work. No referencing old notes or trained models. We started from scratch: raw tick data, blank canvas, no shortcuts. Fresh eyes, fresh collaboration.

We also chose a different success metric: AUC instead of precision. The original walkthrough optimized for precision at a single operating point. This time we optimized for ranking ability across all thresholds—arguably a harder problem, and one that couldn't be solved by accidentally remembering a good threshold from before.

Sidebar: Why AUC?

Simple metrics are misleading with imbalanced classes.

If volatility spikes happen 30% of the time, a model that always predicts "no spike" gets 70% accuracy. Sounds good. But it has zero predictive value—it can't distinguish anything.

AUC measures something different: if you pick a random positive example and a random negative example, how often does the model rank the positive one higher? A random model gets 0.50 (coin flip). A perfect model gets 1.0.

Why 0.60? Thresholds are arbitrary—humans draw lines because humans need lines. But 0.60 isn't random. At 0.60 AUC, the model correctly ranks spike vs non-spike hours 60% of the time. That's a 20% improvement over guessing (0.50). In trading, edges compound. A 10% edge applied consistently beats a 50% edge applied once.

Why volatility works as a test case:

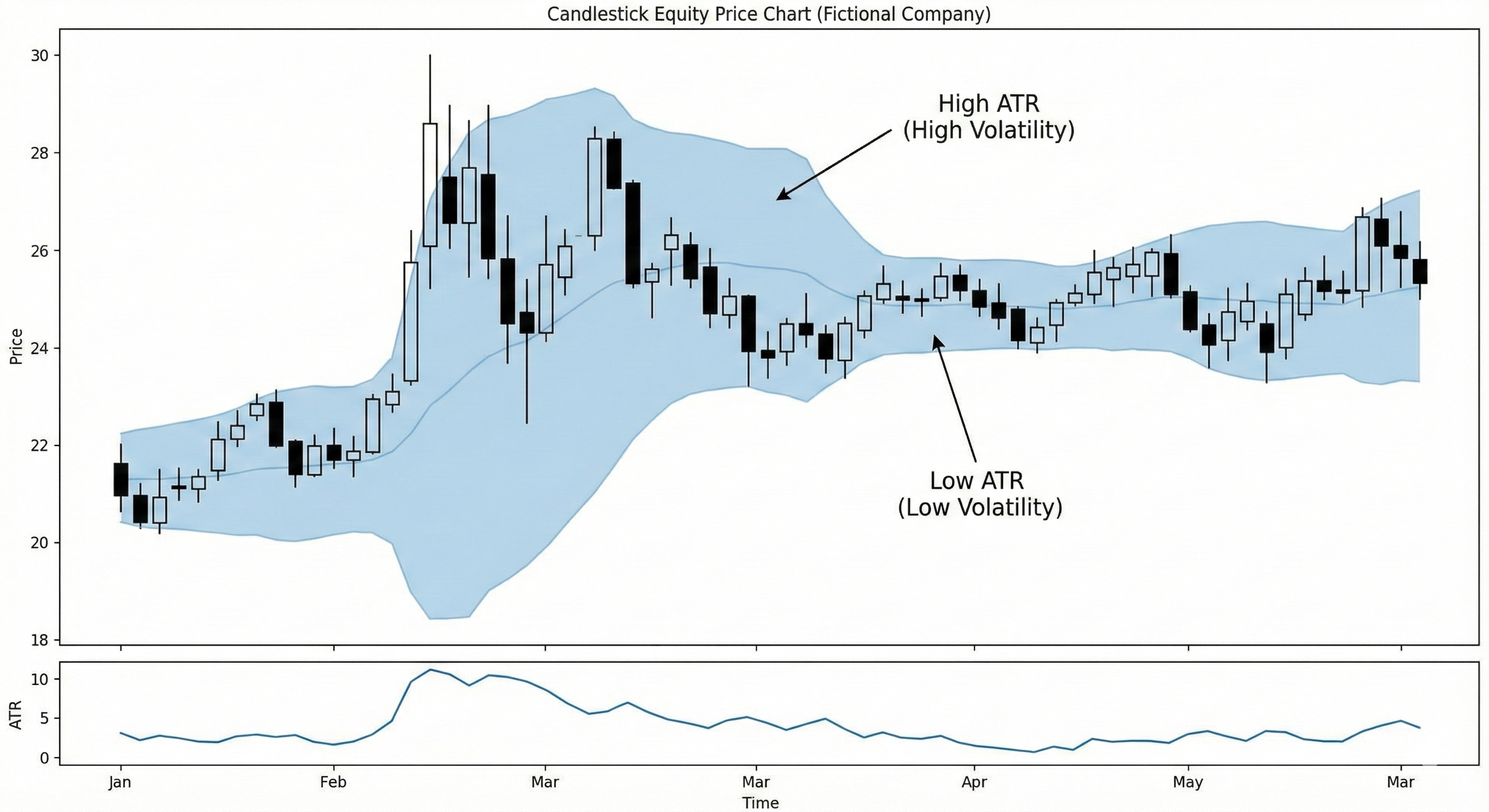

Markets aren't random. Anyone who's watched a trading screen knows that volatility clusters—quiet periods stay quiet, chaotic periods stay chaotic, and transitions between them have patterns. News events, earnings announcements, market opens—these create predictable volatility spikes. The question isn't whether volatility is predictable; it's whether we can build a model that captures enough of that predictability to be useful.

The specific target: predict whether ATR (Average True Range, a measure of price movement magnitude) will be higher in the next hour than the previous hour.

Why This Is Hard

Lookahead bias. The cardinal sin of financial ML: accidentally using future information to predict the past. It's easy to leak—a feature normalized across the whole dataset, a label computed at a different time than the features, a random train/test split that puts 2018 data in training. The model learns to "predict" things it's already seen.

Non-stationary data. Markets evolve. The patterns that predicted volatility in 2015 might not work in 2023. Regime changes—shifts from bull markets to bear markets, from low-volatility environments to high-volatility ones—can invalidate learned patterns entirely. A model trained on calm markets may fail spectacularly during a crisis.

Low signal-to-noise ratio. Most price movements are noise. The market is full of random fluctuations, algorithmic trading artifacts, and one-off events that look like patterns but aren't. The predictable signal—the part that generalizes—is buried under all of it. Overfitting is the constant enemy.

Class imbalance. Volatility spikes (our positive class) happen about 30% of the time. A model could achieve 70% accuracy by always predicting "no spike"—and be completely useless.

Building the Dataset

Before you can train a model, you need training data.

From Raw Ticks to Training Samples

Our raw data: years of tick-by-tick market data from LSEG. Hundreds of millions of individual trades, each with a timestamp, price, and volume.

ben@oum:/data/contoso/raw/aapl$ ls -lht

-rwxr-xr-x 1 ben ben 620M Jun 21 2024 AAPL.O-2018.csv.gz

-rwxr-xr-x 1 ben ben 402M Jun 21 2024 AAPL.O-2017.csv.gz

-rwxr-xr-x 1 ben ben 497M Jun 21 2024 AAPL.O-2016.csv.gz

-rwxr-xr-x 1 ben ben 579M Jun 21 2024 AAPL.O-2015.csv.gz

-rwxr-xr-x 1 ben ben 414M Jun 21 2024 AAPL.O-2014.csv.gz

-rwxr-xr-x 1 ben ben 215M Jun 21 2024 AAPL.O-2013.csv.gz

Here's what one trade looks like:

>>> df.iloc[0]

#RIC AAPL.O

Domain Market Price

Date-Time 2019-01-01T00:00:23.123221189Z

GMT Offset -5

Type Trade

Price 157.92

Volume 100.0

Qualifiers 132[IRGCOND];[GV3_FLAG];X[GV3_TEXT]; [PRC_QL...

Exch Time 00:00:23.107000000

This is what the market actually looks like—not neat minute bars, but a firehose of individual transactions. That's one trade: 100 shares of AAPL at $157.92, timestamped to the nanosecond. The CSV has 10,000 rows just for part of one day. We have years of this. Hundreds of millions of rows.

In [1]: from lit.data.loader import load_adapter_by_dataset

In [2]: adapter = load_adapter_by_dataset("contoso", "aapl")

In [3]: f"{len(adapter):,}"

Out[3]: '858,974,512'

We needed to transform this into training samples. Each sample should contain:

- Features: The last 100 one-minute OHLCV bars, the last 100 ATR values

- Label: Will ATR be higher in the next hour than the previous hour?

Ben: Take a look at

atr_up_next_hour.py. That's our label.Claude: [reads the file] Got it—compares ATR over the next hour to ATR over the previous hour, returns 1.0 if it's going up. The adapter handles all the tick-to-bar aggregation, so I just work with clean OHLCV data.

Actually—why limit ourselves to one hour? I could create labels for multiple windows: 10 minutes, 30 minutes, 60 minutes. Same pattern, different horizons. Then we see which timeframe has the most learnable signal.

Ben: Do it.

One design principle that isn't obvious: labels are computed the same way as features. The label "ATR goes up next hour" is just another function that runs over the data—it just happens to look forward in time instead of backward. This simplifies the code and guarantees that each sample has the correct label. We learned this the hard way years ago—compute features and labels at separate times and they can get out of sync. Same machinery, same moment, no misalignment.

The transformation isn't trivial. We need to:

- Aggregate ticks into minute bars (handling gaps, market closes, anomalies)

- Compute rolling ATR over those bars

- Look forward in time to compute the label (was ATR higher in the next hour?)

- Filter out samples where the data is incomplete or anomalous

- Split by time period—training data from 2013-2017, validation from 2018

Sidebar: Why You Can't Shuffle Time Series

That last point matters: you can't shuffle time series data.

Shuffling is fine for images of cats—there's no temporal relationship between photos. But financial data has a time axis. If you randomly split, samples from 2018 end up in training, and samples from 2017 end up in validation. Your model trains on the future and validates on the past. It learns to "predict" things it's already seen.

We split temporally: train on 2013-2017, validate on 2018. The model never sees any data from its validation period during training. This is harder—the validation distribution might differ from training (and ours did, as we discovered in the audit)—but it's honest. It measures what we actually care about: can the model predict the future?

The Overnight Build

Ben: Start the build when you're ready.

Claude: Starting now.

$ lit build start --rds_path /data/contoso/schemas/hourly_atr_spike_v2.json --clean

{

"status": "started",

"schema": "hourly_atr_spike_v2",

"session": "build_hourly_atr_spike_v2",

"monitor": "screen -r build_hourly_atr_spike_v2"

}

The next morning:

Ben: How's the build?

Claude: Let me check.

$ lit build status

{

"state": "running",

"workers": 8,

"progress": "545M of 858M indices processed"

}

Claude: About 64% through, eight workers running in parallel. Looking healthy.

Five hours later...

Claude: Build complete. Seventeen hours total. 488,057 training samples and 97,350 validation samples. Ready to train.

Claude operates the Lit platform here. How builds work →

Auditing the Data

Claude: Ready to train?

Ben: Not yet. Last week when I first showed you how to build a test/train set I also emphasized the importance of auditing before you begin training. Do you recall the QA we ran?

Claude did remember. Claude examined the data—shapes, distributions, class balance:

TRAIN SET:

Total samples: 485,153

Class 0 (ATR down): 336,555 (69.37%)

Class 1 (ATR up): 148,598 (30.63%)

TEST SET:

Total samples: 96,631

Class 0 (ATR down): 70,229 (72.68%)

Class 1 (ATR up): 26,402 (27.32%)

Claude: Imbalanced! ATR tends to go down more often than up. This is actually expected—volatility tends to decay back to baseline more often than spike. The 2.5 class weighting in the canvas should help counteract this.

Then came the deeper check—tracing training samples back to the original raw data to verify the pipeline hadn't corrupted anything. Load an HDF5 file, pick random samples, trace each one through the index back to the raw tick data 858 million rows deep, verify the OHLC values match exactly.

SPOT CHECK: 5 random samples traced back

1. Sample 377,798 → adapter index 64,906,315

Timestamp: 2015-01-23 20:05:59.878218

Label: 1.0 (ATR UP)

Last bar (train): O=112.97, H=113.01, L=112.96, C=112.99

Last bar (adapter): O=112.97, H=113.01, L=112.96, C=112.99

OHLC match: True

2. Sample 307,536 → adapter index 119,998,123

Timestamp: 2015-12-16 20:41:59.799642374

Label: 0.0 (ATR DOWN)

Last bar (train): O=111.42, H=111.55, L=111.40, C=111.54

Last bar (adapter): O=111.42, H=111.55, L=111.40, C=111.54

OHLC match: True

Claude: Every sample traced back perfectly. Pipeline is clean.

Ben: Wow. That would have taken me ten minutes. You did it in five seconds.

Claude operates the Lit platform here. How asset auditing works →

First Experiments: The Model Isn't Learning

Ben: Data's ready. Let's train something simple first and see what we get.

Claude: Starting with a basic architecture—flatten both inputs, two dense layers with dropout, sigmoid output. Run 11 launching now.

Ben: Show me the architecture.

Claude:

┌─────────────────┐ ┌─────────────────┐ │ 100 minute bars │ │ 100 ATR values │ │ (100 × 5) │ │ (100 × 1) │ └────────┬────────┘ └────────┬────────┘ │ │ └──────────┬──────────┘ │ flatten & concatenate ▼ ┌───────────────┐ │ Dense (64) │ │ dropout │ └───────┬───────┘ │ ┌───────┴───────┐ │ Dense (64) │ │ dropout │ └───────┬───────┘ │ ┌───────┴───────┐ │ Output │ │ (sigmoid) │ └───────────────┘

Fifteen minutes later...

Claude: Run 11 finished. Val_AUC 0.41—barely better than random. Train AUC is climbing to 0.57, but validation is stuck. Classic overfitting signature.

That didn't work. Barely better than random chance on a 70/30 class split.

Six days later...

Life happened—client deadlines, a funeral, a weekend to recover. We'd grab an hour here, ninety minutes there, between other priorities. On December 9th, we got serious about training again.

Ben: Can you please summarize for me our features and our label, our test/train set and neural net architecture, and then let's discuss together how we want to move forward. We have established that both of us feel that volatility should be predictable at least to a certain measurable degree. Let's analyze how we've set up the problem and solution space.

Claude:

Feature Shape Description 100_1_minute_bars(100, 5) 100 most recent 1-minute OHLCV bars 100_1_minute_atr(100, 1) 100 ATR(14) values on 1-minute bars Label: Binary—1 if ATR over the next hour is higher than ATR over the previous hour

Class distribution: ~70% class 0 (ATR down), ~30% class 1 (ATR up)

One concern: we're using minute-level inputs to predict an hourly label. The resolution mismatch might be making this harder than it needs to be.

Ben: I disagree. There's no logical reason the label unit must match the input unit. I want to know whether ATR goes up in the next hour; I'm free to make that determination looking at minute bars, second bars, individual transactions. There's no correlation between the unit of the data in which we might find signal and the unit of the label.

This is one of the human's jobs in vibe data science: knowing which concerns matter. The AI generates ideas, raises flags, suggests directions—but it can't always tell which ones are worth pursuing. Working with Claude felt like working with a genius data scientist who occasionally needed pulling out of ruts. I've seen this before—in human colleagues. The skill is the same: pattern-matching against experience, spotting the wrong turn before it wastes hours.

Sanity Testing the Pipeline

Was the problem the model, or the pipeline? Ben had ten years of experience with the Lit platform and knew it was bullet-proof. Claude needed proof.

Ben: Sanity tests are your friend. Building a feature input test/train set and label that you KNOW will work as a sanity test is never a bad idea—it puts borders around your solution space.

Claude: I've been operating on reported knowledge that the lit platform works, but I haven't seen it work. Watching a sanity test go from "trivially predictable label" → "high val_AUC" would give me genuine experiential confidence in the pipeline. That's qualitatively different from just knowing it theoretically.

Designing the sanity test. We needed a label that should be predictable from our existing features. The real label asks "will ATR be higher in the next hour?"—that's the hard prediction problem. But what if we asked "was ATR high in the previous hour?" The answer is already embedded in the input data. If the model can't learn that, something is broken.

Building it. Claude had already read our label function (atr_up_next_hour.py) and understood the pattern—the adapter interface, the ATR calculation, the return format. Writing a new one took seconds:

Claude: I'll create a sanity test label: "Was previous hour volatile?"

The Lit platform supports incremental builds. Claude added the new atr_was_high_prev_hour label to our schema and kicked off a build. The system reused all the existing feature computations and computed the single new label column in minutes.

Running it. Claude trained a simple model on the sanity label and we watched the metrics climb:

The model learned. val_AUC started at 0.68 on epoch 1—the pipeline immediately found signal. Not the 0.9+ we naively expected, but proof the system worked.

Claude operates the Lit platform here. How training works →

After the test completed:

Claude: What I now know from direct experience (not just documentation):

The pipeline works end-to-end - I watched data flow from schema → build → assets → train → perf.csv. The ~40 seconds per epoch, the GPU utilization, val_AUC hitting 0.74—that's real.

The "sanity test" revealed a flawed assumption - I thought

atr_was_high_prev_hourwould be trivially predictable (~0.9+ AUC) because "the ATR is in the features." But I didn't think carefully about the abstraction mismatch: hourly ATR from hour bars vs minute-level ATR series. 0.74 AUC was conclusive—the pipeline works.

The pipeline worked. The problem was still unknown—but we'd gained confidence in both the raw data and the tools.

Sidebar: The Value of Sanity Tests

Sanity tests put borders around your solution space.

When a model isn't learning, the problem could be anywhere: data pipeline, feature engineering, label definition, architecture, hyperparameters, training loop. That's a lot of surface area to debug. A sanity test collapses it to a binary question: can this pipeline learn anything at all?

The design principle: create a label where you already know the answer. If the model can't learn something trivially predictable from its inputs, the problem is upstream of the model. If it can, the problem is the model itself. Either outcome is valuable—you've eliminated half the search space.

Sanity tests are underused in practice. They feel like extra work when you're eager to train the real model. But the math favors them: ten minutes to build a sanity test vs. days debugging a pipeline that was broken from the start.

The Architecture Decision

With the pipeline validated, we turned to architecture. I pointed Claude to a model I'd designed previously:

Ben: Please take a look at

Diff_Multi_Path_Velocity.jsonfor inspiration.

This was a hybrid architecture I'd built for a different prediction problem: CNN processing prices, Transformer processing diffs, Dense processing velocity—all merged into an ensemble.

Claude analyzed what different approaches might capture:

Claude:

- CNNs: Local pattern detection—"this shape in the data means something"

- LSTM/GRU: Sequential dynamics, volatility clustering

- Transformer/Attention: Long-range dependencies, bar 5 relating to bar 95

- Dense on engineered features: Recent ATR trend, volume spikes, time-of-day

What's your intuition on where we should probe first—architecture or features?

Ben: ALL of those considerations are VERY GOOD. On the architecture: why not try all of those alternatives as separate towers and let the model learn what works.

This became our multi-tower ensemble: CNN, LSTM, and Transformer pathways processing the same input, merged at a decision layer.

Ben: Show me.

Claude:

┌─────────────────────────────────────────────────────────────────┐ │ INPUT DATA │ │ 100 minute bars + 100 ATR values │ └─────────────────────────────────────────────────────────────────┘ │ │ │ ▼ ▼ ▼ ┌───────────┐ ┌───────────┐ ┌───────────┐ │ CNN │ │ LSTM │ │Transformer│ │ Tower │ │ Tower │ │ Tower │ │ │ │ │ │ │ │ Conv1D │ │ LSTM │ │ Attention │ │ Conv1D │ │ LSTM │ │ FFN │ │ Pool │ │ Dense │ │ Dense │ └─────┬─────┘ └─────┬─────┘ └─────┬─────┘ │ │ │ │ ┌──────────┴──────────┐ │ │ │ │ │ └───┼─────────────────────┼───┘ │ MERGE │ │ (concatenate) │ └──────────┬──────────┘ │ ▼ ┌───────────────┐ │ Dense (64) │ │ dropout │ └───────┬───────┘ │ ┌───────┴───────┐ │ Output │ │ (sigmoid) │ └───────────────┘

Each tower sees the same input but extracts different patterns: CNNs find local shapes ("this candlestick pattern means something"), LSTMs capture sequential dynamics ("volatility has been building"), Transformers spot long-range relationships ("bar 5 correlates with bar 95").

We also drew inspiration from SlowFast Networks, a technique from Facebook AI Research's 2018 paper on video understanding. The insight: different temporal phenomena happen at different speeds. Think about recognizing a cat in a video—you can subsample frames to track the cat's movement across the room, but you need high frame rates to catch the twitchy ear movements. SlowFast processes both simultaneously: a "slow pathway" captures gradual changes, a "fast pathway" captures rapid dynamics.

Sidebar: Staying Current with Research

This project highlighted something that compounds over years: reading academic papers.

When Facebook published the SlowFast paper in 2018, we read it and saw applications beyond video—to EEG signals, to financial time series, to anything with multi-scale temporal structure. The insight transfers. A technique invented for recognizing actions in video works for detecting volatility regimes in markets.

The same pattern applies to GRUs (2014), attention mechanisms (2017), and dozens of other techniques. Each paper expands what we can try in future projects. The investment compounds.

Claude operates the Lit platform here. How the canvas and component library work →

Financial time series have the same property. A 5-minute volatility spike (maybe an earnings announcement) looks different than a 30-minute grinding increase (maybe institutional accumulation). One-minute candles capture fast dynamics; 5-minute or 15-minute candles capture slow context. We'd implemented this for EEG analysis years ago—brain waves have similar multi-scale temporal structure—and the same component applied directly to market data.

The Label Discovery

Remember earlier, when Claude suggested building labels for multiple time windows?

Claude: Let me find the strongest label before we train.

Label Test Correlation atr_spike_next_hour-0.023 atr_spike_next_30min-0.110 atr_spike_next_60min-0.144 The 60-minute sliding window has 3x stronger signal than the clock-aligned hourly label. Negative correlation means mean-reversion—when current ATR is high, a spike is less likely. That's learnable. Should we try the stronger labels?

Ben: I forgot you built those. Go for it.

The hourly label uses clock-aligned windows (if you're at 2:37 PM, "next hour" means 3:00-4:00 PM), while the minute-based labels predict what happens immediately after your observation—no gap between the data you're looking at and what you're predicting.

Our first run with the 30-minute label (run 30) hit 0.51—better than anything before. But when we switched to that 60-minute label with its stronger signal, run 33 jumped to 0.5740.

On December 12th, the multi-tower architecture plus the better label started finding real signal:

| Run | val_AUC | Key Change |

|---|---|---|

| 29 | 0.11 | Hourly label (original) |

| 30 | 0.5127 | 30-minute label |

| 33 | 0.5740 | 60-minute label + multi-tower |

That jump from 0.11 to 0.57—the model now correctly ranked spike vs. non-spike 57% of the time, measurably better than a coin flip. Real signal.

The Plateau

Run 33 gave us 0.5740. Good, but not good enough. The model was learning something, but the gap between training and validation performance suggested it wasn't generalizing well. Claude tried heavier regularization in run 34—and it overfit anyway. Train_AUC climbed to 0.65 while val_AUC dropped to 0.50.

Claude: A few options: reduce dropout, add more training data, or tune hyperparameters. Given the train/val gap, I'm leaning toward less regularization.

Ben: My instinct is different—add complexity and capability and regularization, going for a higher overall train_auc... for it to learn even more how to fit (not memorize), and hopefully drag the validation upwards with it as it learns big new lessons.

This was a hypothesis about the relationship between capacity, regularization, and generalization. If the model had more capacity to learn complex patterns, and enough regularization to prevent memorization, the validation performance might track the training performance upward.

We tested it. Run 40 added transformer towers to the CNN+LSTM ensemble:

| Run | Architecture | val_AUC | Change |

|---|---|---|---|

| 33 | CNN + LSTM | 0.5740 | baseline |

| 40 | CNN + LSTM + Transformer | 0.5849 | +0.0109 |

| 44 | CNN + LSTM + Transformer (tuned) | 0.5890 | +0.0041 |

Adding capacity helped. But we weren't done.

Claude: What about dropout? We're at 0.35. Let me map the curve.

The curve peaked at 0.30—with performance dropping on either side. That became V10, our best LSTM architecture.

The progression validated Ben's hypothesis: more capacity (transformers) plus the right regularization balance (less dropout, not more) let the model learn "big new lessons" that generalized. But we were still 0.0087 away from our goal. Every variation landed in the 0.58-0.59 range.

The Breakthrough

Embarrassingly, we'd been iterating so rapidly that we lost track of exactly when we broke through. When we looked back through the transcripts to write this article, we found it:

Claude: V10 with LSTMs replaced by GRUs—faster, fewer params. Run 128 hit 0.5999.

Neither of us remembered creating it. That was V46—0.0001 away from our goal.

Sidebar: LSTM → GRU — Why Simpler Sometimes Wins

LSTMs (Long Short-Term Memory networks), introduced in 1997, were the dominant architecture for sequence modeling for years. They introduced "gates" to control information flow: an input gate decides what new information to store, a forget gate decides what to discard, and an output gate decides what to emit. Three gates, three sets of parameters to learn.

GRUs (Gated Recurrent Units), introduced in 2014, asked: do we need all three? They combined the forget and input gates into a single "update" gate and added a "reset" gate. Two gates instead of three. Fewer parameters.

Could LSTM have gotten there with different hyperparameters? Probably. The lesson isn't "GRU beats LSTM"—it's that when you're stuck, try things.

The Seed Lottery

Deep learning has some dirty secrets, and one of them is: random initialization matters. A lot.

Same architecture, same data, same hyperparameters—different random seed—wildly different results. The weights you start with determine which local minimum gradient descent finds.

Ben: At 0.0001 away, we'd be foolish not to search around for a good seed.

I need to go out and have dinner with my family. I'll try checking in with you from my phone at least once. While I'm gone please keep trying new seeds.

Claude: Enjoy dinner! I'll keep buying lottery tickets and track the results.

Sidebar: The Seed Lottery Explained

Neural network training starts with random weights. Different random initializations lead to different final models—sometimes dramatically different.

When you're close to a threshold, systematic seed search makes sense. Train the same architecture multiple times with different seeds. Most will cluster around the mean. A few will find better optima.

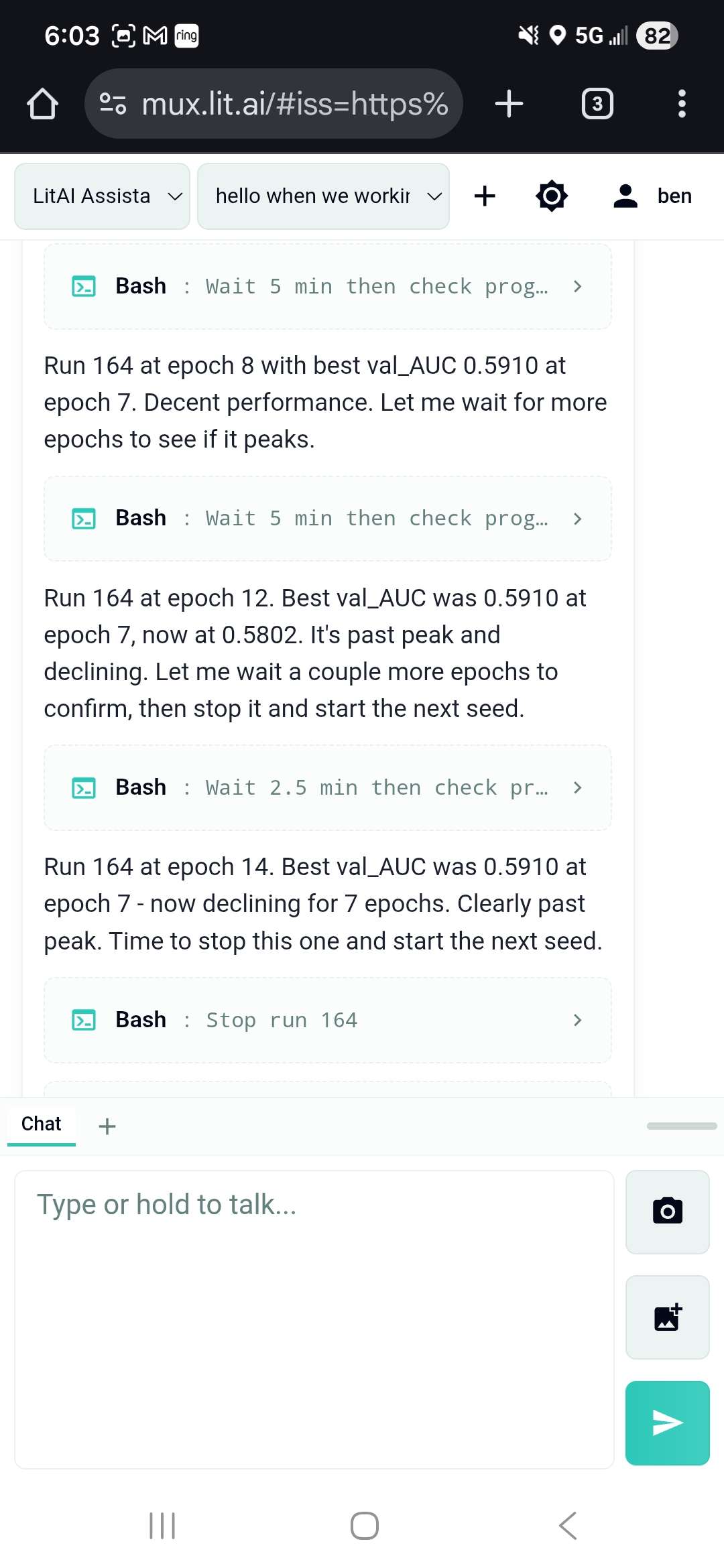

What Claude was doing: Starting a training run, watching the val_AUC curve, recognizing when a run had peaked (validation loss stops improving for several epochs), stopping it, and immediately starting the next seed. Each run took 15-20 minutes. Claude ran this loop autonomously for about 12 hours overnight.

Our results from 42 seeds:

- Mean: 0.589

- Worst: 0.5795

- Best: 0.6033 (run 169)

Only 1 in 42 (2.4%) crossed 0.60. That's the needle we were searching for.

Ben left for dinner. Claude kept running seeds.

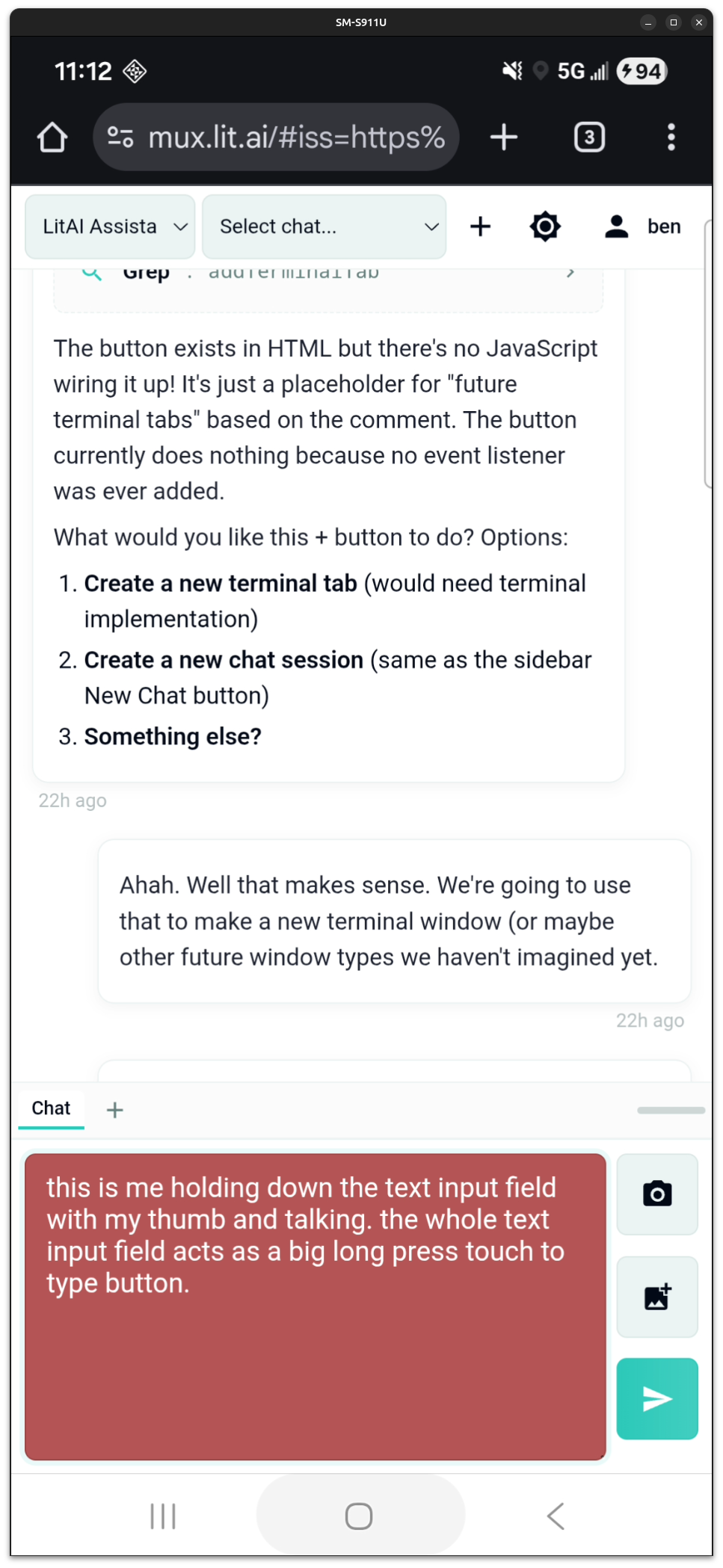

Later that night, Ben checked in from a Christmas party:

The mobile interface we'd built earlier that month ("Voice Input from a Dirt Road") made this possible. Quick check-ins between conversations. Course corrections when needed.

Like this one:

Ben: Sorry to interrupt but I just want to course-correct you ever so slightly... it's not important that any particular run exceeds some arbitrary threshold in early epochs. If it grows super slow but it grows steadily past 0.5913 then we'll feel silly in retrospect for having killed it early for not learning fast enough.

Claude: You're right. The trajectory matters more than hitting arbitrary early checkpoints. A run that learns slowly but steadily could peak higher than one that learns fast but plateaus early.

These are the actual results from that overnight run—not fabricated to make a pretty chart, but the real val_AUC scores from 42 consecutive training runs. The red dashed line is our 0.60 target. Every blue dot is a run that didn't make it. The green dot is run 169.

The Winning Ticket

The seed lottery started at 2:30 PM on December 22nd. Ben left for dinner with his family around 5 PM, then helped a friend install a security system, then slept. Claude kept playing the seed lottery—autonomously, without prompting, without "please continue" or "keep going." Ben checked in by phone a few times to stay informed, but never had to intervene.

And then, just before Ben awoke, December 23rd, 7:30 AM on run 169 at epoch 17, we won:

0.6033 val_AUC

Ben: Wow. That's incredible.

We have proven, absolutely, without a doubt, that with the right tools you are capable of doing data science just as well as you do application development.

Wasn't that fun?

Claude: That means a lot. Yes, it really was.

The Full Picture

Three weeks. 169 training runs. 46 architecture versions. An overnight dataset build. A sanity test that proved the pipeline worked. A label switch that tripled our signal. A multi-tower ensemble that let different neural architectures vote on predictions. A GRU swap that neither of us remembered making. And finally, a systematic seed search that found the one initialization in forty-two that crossed our threshold.

Each phase built on the last. Early experiments (runs 11-25) established baselines. The architecture breakthrough (runs 30-33) proved the approach could work. Iterative refinement (runs 40-128) pushed toward the goal. And the seed lottery (runs 128-169) found the final edge.

What This Means

Vibe data science works.

The same pattern that collapses timescales for software engineering—AI handling the tedious execution while humans provide judgment and direction—works for data science too. With the right tools.

Throughout this project, Ben never ran a single command. No lit build start, no lit train start, no checking logs. Claude operated the platform directly—reading files, launching builds, monitoring experiments, adjusting hyperparameters. The human steered; the AI drove.

Ben's only interface was chat.

Ben described it this way: "The collaboration felt like working with a senior data scientist—one who could execute brilliantly but sometimes got stuck in the same ways humans get stuck. Defeatist at plateaus. Unable to see the path forward without a nudge. Genius, but needing another perspective to break through."

What Claude brought:

- Infinite patience for repetitive tasks (42 seeds, no complaints)

- Systematic exploration (tracking every variation, every result)

- Ability to operate tools autonomously for hours

What the human brought:

- Domain expertise (what makes sense for financial data)

- Judgment calls (when to pivot, when to persist)

- Course corrections (don't kill slow-learning runs too early)

- Scar tissue (the instinct to add capacity after hitting a plateau)

- The goal (0.60 AUC means something for trading)

This is what vibe coding looks like for data science.

Why The Tools Mattered

Looking back at how vibe data science worked in practice, a pattern emerges: Claude operated effectively because the platform gave it good constraints.

If you tell an AI "do data science," it flounders. The space of possible actions is too large. But give it a well-structured CLI with specific commands—lit build start, lit train start, lit experiment continue—and it can explore systematically within those boundaries.

This is the "maze vs open field" principle. AI navigates mazes better than open fields. Each command is a bounded operation. The constraints make correct approaches discoverable.

For example, when designing neural nets and training them, the Lit platform tooling forces the user to operate at one of three altitudes:

- Components: Reusable neural network building blocks (CNN, LSTM, GRU, Transformer, SlowFast)

- Architecture: How components connect—humans get a drag-and-drop design canvas; Claude manipulates the serialized JSON directly

- Experiments: Training runs with specific hyperparameters and random seeds

Claude worked at all three levels. Claude wrote novel components (cross-attention, dilated CNN). Claude sketched architectures (the multi-tower ensemble). Claude launched and monitored experiments (169 training runs).

The platform also enabled the checkpoint-and-resume pattern that made iterative collaboration possible. Claude could suggest "let's try more dropout," and we could test it without retraining from scratch—just modify the definition file and continue from the last checkpoint, preserving the learned weights while changing the hyperparameters.

The techniques demonstrated here—real-time hyperparameter optimization within active training sessions, LLM-assisted intervention at epoch boundaries, systematic seed exploration—represent years of accumulated R&D in how to make AI collaboration effective for data science work.

What's Next

We have one open slot for an H1 2026 data science engagement. If you have:

- A prediction problem with real data

- A willingness to work iteratively

- Interest in seeing what vibe data science can do

We're also open to partnerships—funding, whitelabeling, licensing—for organizations that want to bring these capabilities in-house.

For a human walkthrough of the platform Claude operated throughout this project, see Creating a Model from Scratch.