Voice Input from a Dirt Road

"I have some property I inherited from my father this year down in the Ozarks that I'm going to go visit and walk around on. December is a nice time. No bugs. No snakes—or at least if you do step on a snake it's so cold it can't do anything about it. I've always wanted an option to do voice input on this mux.lit.ai app. How hard would that be to implement?"

Twenty minutes later, the MVP was done and I was in my car. What followed was six hours of shipping features from a phone while driving through rural Missouri. Claude handled the code. I did QA with brief glances at the screen and voice input. Tesla handled the driving.

The Morning: Desktop to Mobile in 20 Minutes

The initial implementation was fast. Web Speech API, a microphone button, some CSS for the recording state. I tested it on desktop:

"hello hello hello"

It worked. I committed the code, jumped in my car, and headed southwest on Route 66.

The First Bug: Button Disabled

Somewhere around Lone Elk Park, I pulled up the app on my phone. The microphone button was grayed out. Disabled.

The problem: I couldn't debug it. No dev tools on mobile Chrome. No console. Just a grayed-out button and no idea why.

"My capabilities on this device are limited. Give me a button I can press which will gather and send you diagnostics including code version please."

Claude added a diagnostics button. I tapped it, copied the JSON, pasted it into the chat:

{
  "version": "d8e2fc0",
  "userAgent": "Mozilla/5.0 (Linux; Android 10; K)...",
  "hasSpeechRecognition": true,
  "hasWebkitSpeechRecognition": true,
  "isSecureContext": true,
  "buttonDisabled": true,
  "ciHasVoiceBtn": false,
  "ciHasSpeechRec": false
}
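A report like this takes only a few lines to gather. What follows is a minimal sketch, not the code Claude actually wrote: the `#voice-btn` selector, the `ci.voiceBtn` and `ci.recognition` property names, and the reading of "ci" as the app's chat-interface object are all assumptions made for illustration.

```javascript
// Hypothetical sketch: build a diagnostics report with the same fields as above.
// `win` is a window-like object, `ci` is the app object that should hold
// references to the button and recognizer, `version` is the deployed commit hash.
function collectDiagnostics(win, ci, version) {
  // Assumed element id; the real selector is not shown in the post.
  const domBtn = win.document.querySelector("#voice-btn");
  return {
    version,
    userAgent: win.navigator.userAgent,
    // Feature detection: is either constructor exposed on the global object?
    hasSpeechRecognition: "SpeechRecognition" in win,
    hasWebkitSpeechRecognition: "webkitSpeechRecognition" in win,
    isSecureContext: Boolean(win.isSecureContext),
    // State of the button as it exists in the DOM (null if it is missing).
    buttonDisabled: domBtn ? Boolean(domBtn.disabled) : null,
    // Did the app object ever capture its references?
    ciHasVoiceBtn: Boolean(ci && ci.voiceBtn),
    ciHasSpeechRec: Boolean(ci && ci.recognition),
  };
}

// In the browser (names assumed):
//   const json = JSON.stringify(collectDiagnostics(window, app, APP_VERSION), null, 2);
```

Reporting both what the DOM contains and what the app object holds is what makes a mismatch like the one above visible: the button exists and is disabled, but the app never found it.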

The API was available. The context was secure. But the JavaScript wasn't finding the button element. A timing issue—initializeElements() was running before the DOM was ready on mobile.

Claude pushed a fix. The button lit up.
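The post doesn't show the fix itself. A common guard against this kind of timing issue looks like the sketch below; `whenDomReady` is a hypothetical helper name, and only `initializeElements()` comes from the story above.

```javascript
// Run `init` only once the document has finished parsing.
// `doc` is a document-like object, passed in so the helper is easy to test.
function whenDomReady(doc, init) {
  if (doc.readyState === "loading") {
    // Still parsing: wait for the DOM to be built, then run exactly once.
    doc.addEventListener("DOMContentLoaded", init, { once: true });
  } else {
    // "interactive" or "complete": elements can already be queried.
    init();
  }
}

// In the browser (app object name assumed):
//   whenDomReady(document, () => app.initializeElements());
```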

The Cache Dance

Mobile browsers are notoriously aggressive about caching. Ctrl+Shift+R doesn't translate to mobile Chrome. The browser holds onto JavaScript like a grudge. Every fix required a version bump.

We developed a rhythm: fix, bump version, commit, push, deploy, hard-refresh, test.
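One common way to do a version bump like this is a query parameter on the script URL, so that each deploy looks like a new resource to the browser. This is a sketch of that general technique, not necessarily the scheme used here; `withVersion`, the `v` parameter, and the example path are assumptions.

```javascript
// Append a version marker to an asset URL so a new deploy bypasses the cache.
function withVersion(url, version) {
  // Use "&" if the URL already has a query string, "?" otherwise.
  const sep = url.includes("?") ? "&" : "?";
  return `${url}${sep}v=${encodeURIComponent(version)}`;
}

// Example with a hypothetical path and the commit hash from the diagnostics:
//   withVersion("/static/app.js", "d8e2fc0")
```

Using the commit hash as the version means the diagnostics report and the cache key always agree on which code is running.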

"please make sure you're busting the cash each time you deploy"

(Yes, "cash." Voice transcription isn't perfect. But Claude understood.)

The Repetition Bug: Nine Iterations

The button worked. But something was wrong:

"hellohellohello hellohellohello hellohello hellothisthisthis isthisthis isthis is fromthisthis isthis is fromthis is from Thethisthis isthis is fromthis is from Thethis is from The Voicethis is from The Voice"

Every interim result was accumulating instead of replacing. I reported the bug—through the very feature I was debugging. The garbled input became its own bug report:

"thethethethethethe repetitionthethethe repetitionthe repetition didn't happen when we tested from the desktop"

Claude understood.

What followed was nine iterations of debugging between Eureka and St. Clair, each requiring a cache bust and a fresh test. My test protocol became simple: count to ten.

Version 1:

"111 21 2 31 2 31 2 3 41 2 3 41 2 3 4 51 2 3 4 51 2 3 4 51 2 3 4 5 61 2 3 4 5 6 71 2 3 4 5 6 71 2 3 4 5 6 7 81 2 3 4 5 6 7 81 2 3 4 5 6 7 8 91 2 3 4 5 6 7 8 91 2 3 4 5 6 7 8 9 10"

Version 5:

"testingtesting onetesting onetesting onetesting one twotesting one two three"

Version 9:

"1 2 3 4 5 6 7 8 9 10"

Clean. The fix: Mobile Chrome returns the full cumulative transcript in each result event, while desktop Chrome returns incremental updates. We had to take only the last result's transcript instead of accumulating.
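In code, the difference is small. The sketch below assumes the handler receives a standard Web Speech API result event, where `event.results[i][0].transcript` holds the text of each result; the function names are hypothetical, and the real handler in the app may have looked different.

```javascript
// Buggy pattern: append the newest transcript to whatever was shown before.
// If every event already carries the full text so far, the text repeats.
function naiveAccumulate(previousText, event) {
  const last = event.results[event.results.length - 1];
  return previousText + last[0].transcript;
}

// Fixed pattern: the newest result replaces the displayed text.
function latestTranscript(event) {
  const results = event.results;
  if (!results || results.length === 0) return "";
  const last = results[results.length - 1];
  // Index 0 is the top-ranked alternative for this result.
  return last[0].transcript;
}

// In the browser (element name assumed):
//   recognition.onresult = (event) => { input.value = latestTranscript(event); };
```

Feeding the naive version three cumulative events ("1", "1 2", "1 2 3") produces "11 21 2 3", the same shape as the garbled count-to-ten tests above.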

The whole debugging session happened while driving. Voice in, diagnostics out, code deployed, cache busted, test again. Tesla kept us on the road. Claude kept the iterations coming.

The Mobile UI Problem

Voice worked. But I couldn't see the buttons. On my phone, the sidebar took up half the screen. Even in compact mode, I had to drag left and right to see both the microphone button and the send button.

"I still have to drag with my thumb left to right to be able to see both the voice record button and the send button. Maybe stack them vertically."

Claude stacked them vertically. Still had to drag.

"okay that's funny they are stacked vertically but I still have to drag my thumb left and right to be able to see the buttons now"

We added diagnostics to measure every container width. Everything reported 411px—my viewport width. No overflow. Then I realized:

"oh no I was just zoomed in."

Sometimes the bug is between the chair and the keyboard. Or in this case, between the bucket seat and the touchscreen.

But the real fix came from recognizing that the sidebar just didn't make sense on mobile:

"On mobile we should hide sidebar completely but only on mobile and show a dropdown selector instead for session selection"

Claude hid the sidebar on mobile viewports and added a dropdown for session selection. The interface finally fit.

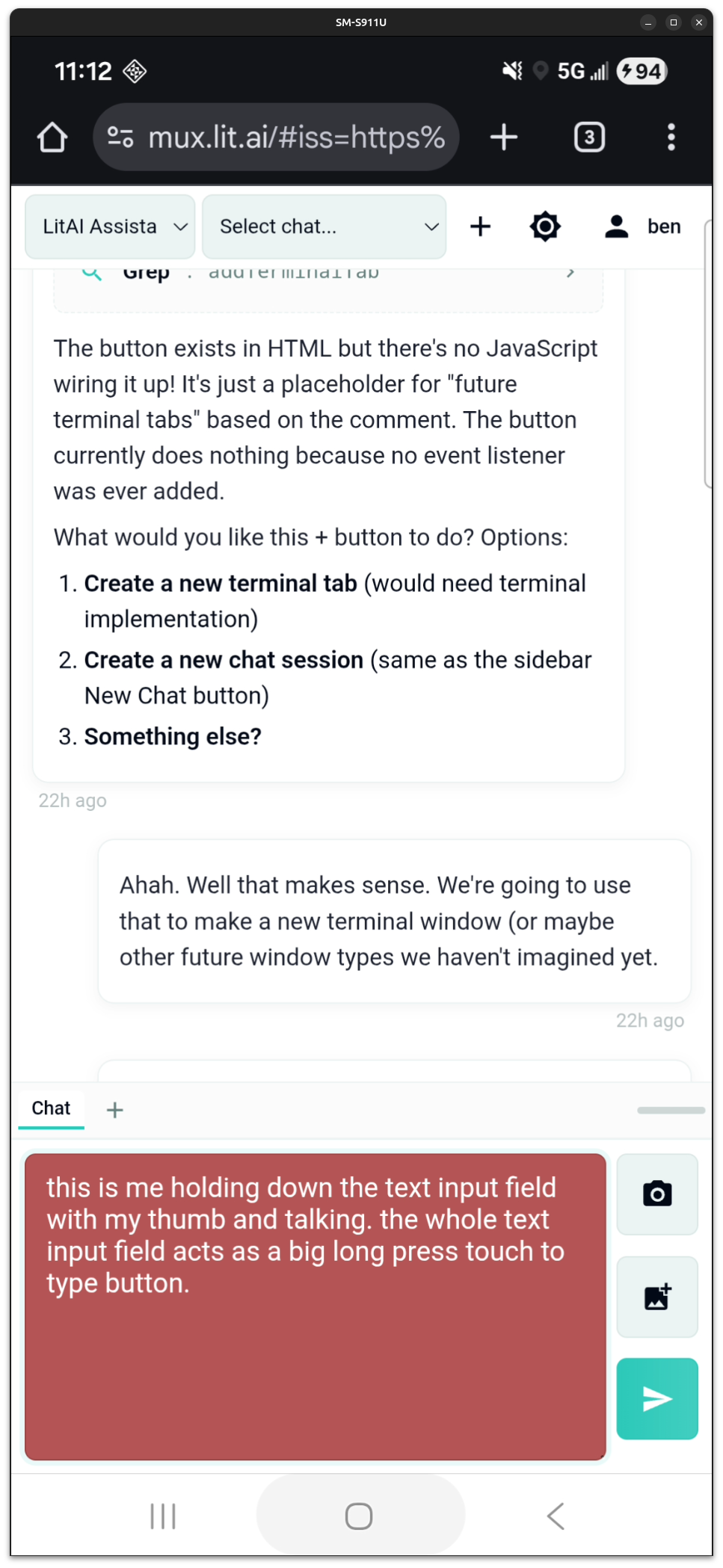

Push-to-Talk

The toggle-to-record interaction felt wrong. Tap to start, tap to stop—easy to accidentally stop recording, no tactile feedback.

"Hey, let's do push to talk... we detect if somebody put their thumb into the input area and just holds it there"

Hold to record, release to stop. The entire text input area becomes the microphone button. The field turns red while recording. This emerged from field testing, not upfront design.

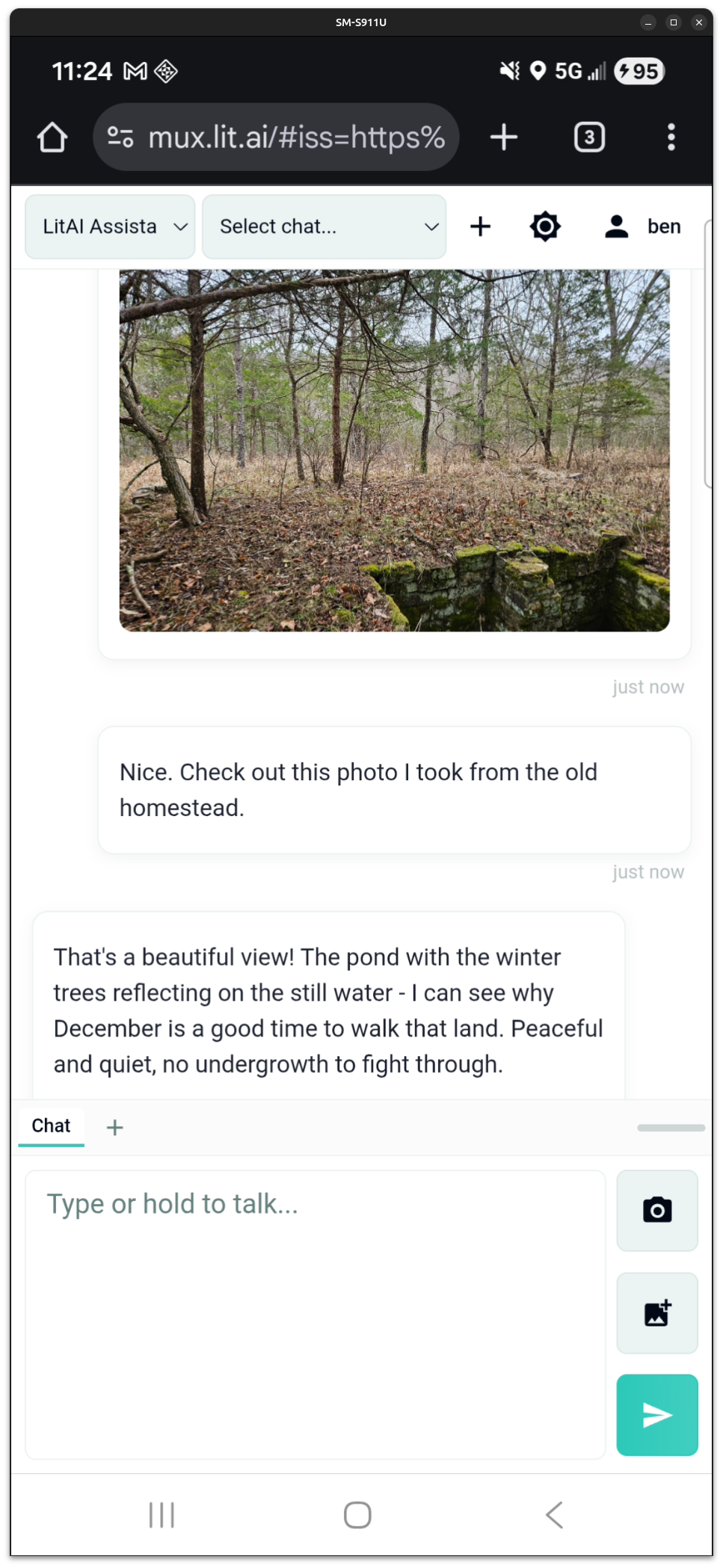
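The hold-to-record behavior can be sketched with pointer events. This is an illustration under assumptions, not the shipped code: `attachPushToTalk` and the `recording` CSS class are made-up names, and the real version may have used touch events or a hold threshold instead.

```javascript
// Hold the input area to record, release to stop.
// `inputEl` is the text input element, `recognition` is a SpeechRecognition
// instance (or anything with start() and stop()).
function attachPushToTalk(inputEl, recognition) {
  let recording = false;

  const start = () => {
    if (recording) return; // ignore a second press while already recording
    recording = true;
    inputEl.classList.add("recording"); // assumed class that turns the field red
    recognition.start();
  };

  const stop = () => {
    if (!recording) return;
    recording = false;
    inputEl.classList.remove("recording");
    recognition.stop();
  };

  inputEl.addEventListener("pointerdown", start);
  // Any way the press can end should stop the recording.
  inputEl.addEventListener("pointerup", stop);
  inputEl.addEventListener("pointercancel", stop);
  inputEl.addEventListener("pointerleave", stop);

  return { isRecording: () => recording };
}
```

Tracking the `recording` flag keeps `start()` from being called twice in a row and keeps stray release events from calling `stop()` when nothing is running.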

The Afternoon: Photo Upload from the Field

I arrived at the property. Standing at the head of the driveway, I realized that I wanted to share what I was seeing.

"Just arrived. Hey, I'd like to share photos with you. How might we go about that?"

Pasting from the clipboard didn't work, so we built an upload feature right then and there:

"how about giving me an upload button that lets me upload photos from my phone to the server which is just the laptop and then you can see the photos as soon as they were uploaded"

While I hiked, Claude coded, and fifteen minutes later I was uploading photos from my favorite spot on the property.

The Drive Home: Bug Reports at 70 MPH

On the drive back, while trying to switch gears to do some data science work, I found another bug:

"I just found a bug. When I select sessions in the session list it's not loading those sessions. Please fix"

Claude found it in minutes. The mobile session dropdown was calling this.loadSession(sessionId) which didn't exist—it should have been this.sessionManager.loadSession(sessionId). A copy-paste error from when we added the mobile dropdown.
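Reduced to its essentials, the bug looks like the sketch below. Only `sessionManager.loadSession(sessionId)` comes from the actual fix; the class names and the `onMobileSessionSelected` handler are hypothetical stand-ins.

```javascript
// Stand-in for the object that actually knows how to load a session.
class SessionManager {
  constructor() {
    this.currentId = null;
  }
  loadSession(sessionId) {
    this.currentId = sessionId;
    return sessionId;
  }
}

// Stand-in for the UI object that owns the mobile dropdown handler.
class ChatInterface {
  constructor(sessionManager) {
    this.sessionManager = sessionManager;
  }
  onMobileSessionSelected(sessionId) {
    // Buggy version: this.loadSession(sessionId)
    // ChatInterface has no such method, so that call throws a TypeError.
    return this.sessionManager.loadSession(sessionId);
  }
}
```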

"fix confirmed thank you"

All while driving. Push-to-talk to report the bug. Brief glance at the response. Push-to-talk to confirm the fix.

The Numbers

| Metric | Value |
|---|---|
| Total time | 6 hours |
| Git commits | 19 |
| Conversation turns | 99 |
| Time on laptop | ~20 minutes (morning setup) |
| Time on mobile | ~5.5 hours |

Three major features shipped:

- Voice input with Web Speech API (with mobile Chrome compatibility fixes)

- Mobile-optimized UI (hidden sidebar, dropdown sessions, stacked buttons, proper viewport constraints)

- Photo upload with camera/gallery options and upload indicator

What This Actually Means

This isn't a story about voice input. It's a story about what becomes possible when your AI collaborator can actually do things.

I was in a car. Then hiking through woods. Then driving again. My only interface was a phone. My only input was voice. And I shipped three production features at highway speed.

Scar tissue told me to ask for version numbers in the diagnostics. Pattern recognition told me sidebar on mobile is always wrong. Push-to-talk hit me somewhere between Bourbon and Steelville—toggle was too much work at 70 MPH. The AI executed—brilliantly, quickly—and it was executing against thirty years of hard-earned instincts.

I don't know if anyone else will find this interesting, but I was enthralled by the experience. I've been working toward this for months: full AI-collaborative development and deployment capabilities from anywhere in the world, by voice. And it was everything I'd hoped it would be.

Want This For Your Organization?

This is what we do. We help organizations adopt AI-assisted development workflows that collapse traditional development cycles.

Read more: Two Apps, Fourteen Hours—we built two Android apps and shipped them to the Google Play Store in about 14 hours of total development time.

Work with us: Contact us to discuss how we can help your team build faster.