"AI" is a neat concept, and it is a concept that grows more exciting and complex every single day. Literally, if you work with AI, and fell asleep for a week, you would end up "behind the curve". This document is meant to be a gentle introduction to what AI is, how it works, and if you're interested-- how to get involved. Also, this document is 100% human written and sourced. I personally have reviewed the source material myself to make sure that it is APPLICABLE and ACCURATE.

I do this because a human-written digest of what AI is matters: when you source information from an AI, there is a chance that you get back information that doesn't apply, isn't accurate, or, in some cases, cites entire source material that doesn't even exist. (And we'll talk about hallucinations later, or if it really interests you, click that link to go there now.)

But come back! There's lots of important material here that will give you an idea of how AI can behave and what you can do to avoid the pitfalls. Understanding AI helps to frame its behavior so that you know what to expect.

It's really hard to pinpoint the exact genesis of the idea of AI, but it has been around roughly as long as computing. The idea of directing a computer to think like a human, or to internalize a strict set of rules and behave by those rules, has probably been around since the early 1900s, but Alan Turing theorized that it was possible to create artificial intelligence in about 1935. The idea (and industry) began to really blossom in 1950 with the release of his paper "Computing Machinery and Intelligence"-- in this paper, Turing unveiled the idea of the "Turing Test". It was at this point that people really began to think it was possible to make a machine that not only exhibited intelligence, but could pass for a human in conversation often enough to fool the person questioning it. As you probably have already imagined, this idea, born in 1950, still reverberates to this very day in AI, and is something data scientists still consider when creating or training an AI.

The crucially limiting problem was that machines of the time never stored any information. They would read in giant stacks of cards with the programming and data on them and perform operations as the data was moving through the computer. How can you create an AI that can modify its own behavior or be trained if it cannot "remember" what has happened in the past? Second to that was the immense expense of running a computer able to perform the most basic of operations-- on the order of $200,000 per month in 50s bucks. That's a staggering expense, but data scientists of the time were not dissuaded. The industry of computing was moving so fast that a solution was guaranteed to make itself seen.

In 1955 and 1956, Allen Newell, Herbert Simon, and Cliff Shaw, working with the RAND Corporation, wrote a program called "Logic Theorist". It was designed to mimic the problem-solving of a human mathematician by proving mathematical theorems. It was able to prove 38 of the first 52 theorems in chapter 2 of "Principia Mathematica", the landmark work on mathematical logic by Alfred North Whitehead and Bertrand Russell. It is largely considered the first actual application of Artificial Intelligence on a real computer at a real scale. It introduced the ideas of "Heuristic Programming", AI, and "Information Processing Language". If you want to learn all there is to know about "Logic Theorist", tap on this link to leave the site and see the most complete archive of this work: Logic Theorist

We're just getting started and 3 new fields of study have been created and a program written to demonstrate that AI can be accomplished. This is probably going to be a "living document", meaning that it will grow and change over time. There's just so much to write, and so little time to educate everyone. But look... buckle up and we'll keep it as low-key as we can because AI is likely the most complex and growing field of study that humans now have. There are so many threads to follow and so many things to try-- but as you'll see later, we're very held back by the amount of computing power that an individual or even a corporation can get their hands on! Today, in late 2024, if you wanted to get properly started in the field of AI, the investment can slide in at $200,000.... what it used to cost for a month of computing time in 1950.

There are amazing things that a committed data scientist can do to assemble a workable system for a lot less, but as you can imagine, the tradeoff is computing speed and response time of a particular model.

From the late 50s through the mid 70s, work on AI grew at an amazing pace because computers finally had the option to store data (starting in the late 50s), which was integral to AI growing. Being able to store results and react to a changing environment was obviously a game-changer. Progress now was never going to stop. The gentlemen that created Logic Theorist, not to be stopped, released "General Problem Solver". Joseph Weizenbaum released "ELIZA" in the mid 60s, and in 1972 Kenneth Colby released "PARRY", which was described as being "ELIZA, but with attitude". Then "DARPA" got involved and decided to start funding the future of AI... but in the mid 70s, data scientists were already starting to figure out that in many cases, the overwhelming need for computing power was always going to be a sticking point-- computers simply didn't have the storage or computing power to digest the mountains of data. DARPA began to realize that they were putting a lot of money into a science that still needed technology to keep up, and the funding dwindled as we arrive in the 80s.

Scientists in the 80s were able to enhance the algorithmic toolkit they used to try to mimic human intelligence, and it was optimized and began running faster on the newer emerging hardware. Corporations and government entities again turned on the funding hose and work on AI accelerated again. Edward Feigenbaum introduced "expert systems", applications which leveraged computational power to mirror the decision-making processes of a human expert.

It was astounding, as expert systems would directly ingest answers from an expert on a topic and could then help other users find those accurate answers. Non-experts could be quickly educated by the AI, and the answers of a topic specialist could be quickly given to many non-experts in a 1 to Many style.
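To make the idea of "ingesting answers from an expert" a little more concrete, here is a minimal sketch of a rule-based system in Python. This is only an illustration, not how any particular 1980s product worked: the plant-care rules, the `RULES` list, and the `infer` function are all made up for this example. The technique shown is usually called forward chaining, where rules keep firing until nothing new can be concluded.

```python
# Hypothetical rules captured from a (made-up) plant-care expert.
# Each rule is: (facts that must all be known, conclusion to add).
RULES = [
    ({"leaves yellow", "soil wet"}, "overwatering"),
    ({"leaves yellow", "soil dry"}, "underwatering"),
    ({"overwatering"}, "advice: water less often"),
    ({"underwatering"}, "advice: water more often"),
]


def infer(facts):
    """Forward chaining: keep firing rules until no new fact appears."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in RULES:
            # A rule fires when all of its conditions are already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


observations = {"leaves yellow", "soil wet"}
conclusions = infer(observations) - observations
print(sorted(conclusions))
```

The non-expert only supplies observations; the expert's knowledge lives entirely in the rules, which is why one specialist's answers can be handed out to many people at once.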

Even the Japanese government got involved, funding projects like expert systems to the order of $400 million in 1980s bucks through its "Fifth Generation Computer Project", colloquially known as FGCP. This project was funded from 1982 to 1990.

The moon shot goals of the FGCP weren't met, but the project did bring more scientists into the industry. Nevertheless, AI was no longer "in", even though the science wasn't finished. AI thrived in the 90s and 2000s despite the lack of significant funding simply due to the dedicated scientists who really believed they could make AI a reality.

For example, Garry Kasparov, a world-renowned chess master, played a series of games against IBM's Deep Blue, an AI model designed to ONLY play chess. Kasparov lost the 1997 match, and AI regained the focus of the people who wrote the checks.

"Dragon NaturallySpeaking" was a voice-to-text software that could be used to live dictate notes or whole pages or chapters of a book. It used a primitive form of AI to determine the likely word you were trying to say-- which dramatically increased its efficiency when working with persons with speech impediments, and this software is beloved to this day.

Cynthia Breazeal introduced "Kismet", a robot that used AI to understand and simulate human emotions. Even in the 1990s toys were the target of AI. I used to own a great toy called "20Q". It was a small ball that could fit in the palm of your hand. It had buttons for YES and NO and SELECT. The point of the game is.... 20 questions. The AI in the game was tasked with asking you 20 questions to determine an item you were thinking about... and it was EERILY accurate. I had games last 3 questions.... and also games that lasted 25. If the AI couldn't figure it out in 20, it would kindly assist itself by adding on more questions to figure out your word.

AlphaGo was developed by Google's DeepMind to play a far more challenging game against a world-champion Go player. The game of Go is outside the scope of this document, but if you want to appreciate how monumental it was that AlphaGo was able to beat a human player, go learn about Go and then come back and enjoy our content about AlphaGo.

Of course there's so much stuff in the middle that I missed and people I didn't recognize for their monumental contributions to the field of Artificial Intelligence.... but the great thing about this document is that you can always come back to focus on something you would like to know about or something you missed. I will do my best to include the most accurate data.

TODAY we live in an age where AI touches every part of the world around us. Telephone calls are made and answered by AI, AI that understands your frustration by the tone of your voice. AI that tries to resolve that frustration. You may not even need to talk to a human now with a properly trained and configured model to handle the calls. I once encountered a complex problem with my taxes and asked the AI what to do and it immediately recognized my problem and told me who to call and what to change to make my taxes legal-- and I have to say, I was impressed. A problem I thought might take hours and up to 10 contacts was resolved without a phone call. This is the power of AI.

If you feel like this is moving really fast-- that's the field of AI. Have a break. Have some water and some toast. Then come back and I'll start teaching you some of the terminology and standard knowledge for working in AI. If there is something missing or incorrect, I always want to know. In most cases you won't even have to cite the source of my error. Just tell me where it is and I'll do the lifting for everyone after that.

When data scientists talk, they will reference a lot of common things all the time. One of them you may have already encountered in my sprawling explanations here:

MODEL - A model is the programming, data, and analysis that makes an AI work and make decisions with little to no human intervention.

DATA SCIENCE - These are the methods and practices utilized to implement machine learning techniques and analytics applied by subject matter experts to provide model insights that lead to increased efficiency of a model.

MACHINE LEARNING - This is the practice of using the appropriate algorithms to "learn" from massive amounts of data. The AI is able to absorb this corpus and extract patterns from the data that may be of use to the user.

CORPUS - Corpus is the word that is commonly used to describe a "group" of data that is to be ingested to be used by the model. A corpus can contain any type of data as long as the model is able to recognize the data.

This is a great question. This is a good question because it recognizes that not all AI is created the same. Not all AI are capable of doing the same work. So what is there? Well, let me lead you through today's most popular types of AI.

There are two general categories that a system would fall under. The first is GOALS, which covers AI systems that are created and trained for a specific outcome (Goal). The second is TECHNIQUES-- a way of training or teaching a computer to respond to input as though it were human... to replicate human intelligence. It's pretty easy (usually) to categorize an AI under those two categories, but let's see how I do.

This is a pretty exciting and interesting field of AI. In this type of AI, we train a neural network algorithm to generate or analyze images and image data. We want to help computers learn to "see" objects, even in strange circumstances. We want computers to recognize objects in images and tell us what they are. This is a precursor to real-time vision processing like what an AI robot would need to navigate our complex world.

This is one of my favorite types of AI. You actually end up training 2 AI and setting them against each other. It's basically small-scale computer warfare. One of the Neural Networks is the generator and the other is the discriminator. If you say "Give me a photo of a gopher in a crossing guard uniform", the generator is going to generate photos of the gopher and present them to the discriminator to say "does this look real to you?" If not, the discriminator's verdict tells the generator to "Do it again. Better this time." Over many rounds the generator gets better at fooling the discriminator, and the discriminator gets better at spotting fakes. These networks powered many earlier image generation tools. Note that newer large scale image generators, such as Photoshop's AI tool and "Stable Diffusion" (software that you can run yourself!), are generally built on a different technique called diffusion models rather than on this generator/discriminator pairing.

With machine learning, we can use algorithms that are capable of ingesting large amounts of data at once and perform tasks like text or image generation, classification (of many types), and prediction. If you are involved in Machine Learning (ML) you can do a couple of types of learning: Unsupervised and Supervised. In Unsupervised ML, the AI is handed unlabeled data and looks for patterns and groupings on its own. For supervised learning, you ALSO have to basically ingest that same amount of data, because in Supervised ML you must repeatedly tell the AI what the correct classification of items is until the AI finally learns how to do it alone.
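Here is a minimal sketch of the difference between the two, using plain Python and a handful of made-up animal weights. Everything in it (the function names, the data, and the choice of a nearest-average classifier and a two-group split) is my own assumption for illustration; real projects use far larger datasets and dedicated libraries.

```python
def train_supervised(examples):
    """Supervised: every example arrives with its correct label."""
    sums, counts = {}, {}
    for value, label in examples:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    # The "model" is just the average value seen for each label.
    return {label: sums[label] / counts[label] for label in sums}


def predict(averages, value):
    """Pick the label whose average is closest to the new value."""
    return min(averages, key=lambda label: abs(averages[label] - value))


def cluster_unsupervised(values, rounds=10):
    """Unsupervised: no labels, so split the data into two groups by itself."""
    low, high = min(values), max(values)
    if low == high:
        return list(values), []
    for _ in range(rounds):
        group_low = [v for v in values if abs(v - low) <= abs(v - high)]
        group_high = [v for v in values if abs(v - low) > abs(v - high)]
        low = sum(group_low) / len(group_low)
        high = sum(group_high) / len(group_high)
    return group_low, group_high


# Hypothetical weights in kilograms.
labeled = [(4.0, "cat"), (5.0, "cat"), (4.5, "cat"),
           (20.0, "dog"), (25.0, "dog"), (30.0, "dog")]
averages = train_supervised(labeled)
print(predict(averages, 6.0))

unlabeled = [weight for weight, _ in labeled]
print(cluster_unsupervised(unlabeled))
```

The supervised half can name its answer ("cat") because a human supplied the names. The unsupervised half finds the same two groups but has no idea what to call them.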

In this type of AI, we use neural network algorithms to look at text data. We can feed a massive "corpus" of text to an AI that can read and understand the context of the documents we send. NLP is used by most of the AI you have used in the last few years: Microsoft Copilot, ChatGPT, etc. However, these examples often use NLP and Computer Vision models in combination with each other.

This type of AI is built from layers of simple processing units that are connected in a way meant to mimic the neurons of the human brain. And while Neural Networks are the spark that starts up many AI methods, they require extremely large amounts of information, computing power, and resources, and therefore aren't recommended for projects that can be accomplished more easily with a different type of AI.

Deep learning utilizes several different philosophies and the term actually itself covers a lot of territory, looping in other disciplines. It's machine learning that is done by neural networks. Your models can be either 'shallow' or 'deep' and can contain 1 to MANY layers. A model with more layers would be deeper and thus require more computational effort.
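To show what "more layers means more computational effort" looks like, here is a rough sketch of a tiny neural network in plain Python. The layer sizes, the random starting weights, and every function name here are assumptions I picked for the example. The network is not trained; the sketch only pushes numbers through the layers and counts how many adjustable values each network carries.

```python
import math
import random


def make_layer(n_inputs, n_outputs, rng):
    """One layer = a grid of weights plus one bias per output neuron."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_inputs)]
               for _ in range(n_outputs)]
    biases = [0.0] * n_outputs
    return weights, biases


def build_network(sizes, seed=0):
    """sizes=[4, 8, 3] means 4 inputs, one hidden layer of 8, and 3 outputs."""
    rng = random.Random(seed)
    return [make_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]


def forward(layers, inputs):
    """Pass the inputs through every layer in order."""
    values = inputs
    for weights, biases in layers:
        values = [
            math.tanh(sum(w * v for w, v in zip(row, values)) + b)
            for row, b in zip(weights, biases)
        ]
    return values


def count_parameters(layers):
    """Every weight and bias is a number the training process must tune."""
    return sum(len(w) * len(w[0]) + len(b) for w, b in layers)


shallow = build_network([4, 3])           # 1 layer
deep = build_network([4, 8, 8, 8, 3])     # 4 layers

print(len(shallow), count_parameters(shallow))
print(len(deep), count_parameters(deep))
print(forward(deep, [0.1, 0.2, 0.3, 0.4]))
```

Both networks take the same 4 inputs and give 3 outputs, but the deeper one has many more weights to store, multiply, and eventually train.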

Reinforcement Learning uses a system of penalties and rewards in order to train the system. For example, let's say we have an oval track and the objective is for the car to drive itself around the track as fast as possible. We give the car an accelerator and a brake and a steering system as well as a rudimentary gear system containing Park, Reverse, and Drive. Now once we have set this all up we tell the trainer "Hey you need to keep that car on the center line and go around the track as quickly as possible. If you deviate from the center line, you will lose 40 points per meter you have deviated from the center line. However, keep the car on the center line (the center line is somewhere underneath the car as it drives) and we'll give you 100 points per meter." Now you turn this AI loose to train itself. If you watch, the cars start by acting just insane... reverse at full throttle and then push it into park, running into walls and gardens... but over time, the AI learns the juicy secret to keeping those points... drive forward, drive fast, keep the car on the line. Soon you will notice cars almost in perfect synchronicity as they move speedily around the track. That's reinforcement learning. In some systems, the training process reaches out to humans to judge which answers are better, and those judgments become the reward. This approach is called "Reinforcement Learning from Human Feedback", or RLHF, and it is widely used to tune chatbots like ChatGPT.
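The points scheme from the track example can be sketched in a few lines of Python. This is a heavily simplified toy, and nearly everything in it is my own assumption: the track is reduced to 5 side-by-side lane positions, the car can only steer left, go straight, or steer right, and the learning method is a textbook technique called tabular Q-learning. Only the rewards (100 points on the center line, minus 40 points per meter off it) come from the example above.

```python
import random

LANES = 5              # lane positions 0..4 across the track (assumed layout)
CENTER = 2             # index of the center line
ACTIONS = (-1, 0, 1)   # steer left, go straight, steer right


def step(position, action):
    """Move the car one meter forward and return (new_position, reward)."""
    new_position = min(LANES - 1, max(0, position + action))
    distance = abs(new_position - CENTER)
    reward = 100 if distance == 0 else -40 * distance
    return new_position, reward


def train(episodes=500, steps=20, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a score for every (position, action) pair by trial and error."""
    rng = random.Random(seed)
    q = {(p, a): 0.0 for p in range(LANES) for a in ACTIONS}
    for _ in range(episodes):
        position = rng.randrange(LANES)
        for _ in range(steps):
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)    # explore: try something random
            else:
                action = max(ACTIONS, key=lambda a: q[(position, a)])  # exploit
            new_position, reward = step(position, action)
            best_next = max(q[(new_position, a)] for a in ACTIONS)
            # Nudge the old score toward "reward now plus best score later".
            q[(position, action)] += alpha * (
                reward + gamma * best_next - q[(position, action)]
            )
            position = new_position
    return q


def best_action(q, position):
    return max(ACTIONS, key=lambda a: q[(position, a)])


q_table = train()
print([best_action(q_table, p) for p in range(LANES)])
```

Nobody ever tells the program "steer toward the middle". It works that out purely from the points, which is the whole idea of reinforcement learning.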

This is the holy grail of data scientists everywhere. If a model and AI are able to reason, think, perceive, and react-- it is then commonly known as AGI. Data scientists are working towards a goal where AGI allows an AI to reason well enough to create its own solutions to problems from the available data. This should all be done without human intervention. AGI is also a very hot topic because there are people out there that believe that once we achieve AGI, it will not be long before the AI rules or destroys us. There are people who believe that AGI is already real and being contained by OpenAI, the company that offers ChatGPT, one of the most advanced AI available to the public. You can easily imagine this to be the case when you get eerily accurate responses from ChatGPT, almost like you're talking to a friend that's really ravenous about getting information for you!

Alan Turing, the Father of AI, was an English mathematician and computer scientist. His work on breaking the Enigma machine, the cipher device behind the German naval codes, is credited with shortening World War II by several years. It took him 6 months to deliver this amazing feat.

After the war, Turing began working at the National Physical Laboratory, where he designed the Automatic Computing Engine (ACE), presenting the design in 1946. It is credited as one of the first designs for a stored-program computer, a computer that keeps its instructions (the program) in memory alongside its data, instead of being fed one instruction at a time with nothing stored for later use.

Later, Turing had a problem because the Official Secrets Act forbade him from talking about his wartime codebreaking work, or even explaining the basis of his analysis about how the machine might work, which resulted in delays starting the ACE project, and so in 1947 he took a sabbatical year-- a year which resulted in the work "Intelligent Machinery", which was seminal, but not published in his lifetime. While he was away, the Pilot ACE was being built in absentia, and it executed its first program on May 10, 1950. The full version of the ACE was not built until after Turing's death by suicide in 1954.

In 1951, Turing began work in mathematical biology-- work that Marvin Minsky was involved in. Turing published what many call his "masterpiece", "The Chemical Basis of Morphogenesis", in January 1952. He was interested in how patterns and shapes developed in biological organisms. His work led to complex calculations that had to be solved by hand because of the lack of powerful computers at the time which could have quickly handled his work.

Even though "The Chemical Basis of Morphogenesis" was published before the structure of DNA was fully understood, Turing's morphogenesis work is still to this day considered his seminal work in mathematical biology. His understanding of morphogenesis has been relevant all the way to a 2023 study about the growth of chia seeds.

It was Turing's 1950 paper asking if it was possible for a machine to think, and the development of a test to answer that question, that solidifies Turing's spot in the field of artificial intelligence.

The phrase "Turing Test" is more broadly used when referring to certain kinds of behavioral tests designed by humans to test for presence of mind, thought, or simple intelligence. Philosophically, this idea goes, in part, back to Descartes' Discourse on the Method, and also to the 1669 writings of the Cartesian de Cordemoy. There is evidence that Turing had already read about Descartes' language test when, in 1950, he changed the trajectory of mechanical thinking with his paper "Computing Machinery and Intelligence", which introduces to us the idea that a machine may be able to exhibit intelligent behavior that is equivalent to, or indistinguishable from, that of a human participant.

We certainly can't talk about AI without talking about the Turing Test, a proposal made by Alan Turing in 1950 that was a way to deal with the question "Can machines think?" And even Turing, the father of the Turing test, thought the pursuit of the question "too meaningless" to deserve discussion.

However, Turing considered a related position concerning whether a machine could do well at what he called the "Imitation Game". Then from Turing's perspective, we have a philosophical question worth considering.

We could write whole chapters just on Turing himself and the philosophy of the test. There are loads of published works that go far beyond what I could discuss here, but a simple search will bring a vast inventory for you to observe.

The test is a game, and the game has three players: a machine, a person, and an interrogator. The interrogator asks questions of the person and the machine. Turing predicted that by about the year 2000 machines would play the game so well that an average interrogator would have no more than a 70 percent chance of making the right identification after 5 minutes of questioning; in other words, the machine would fool the interrogator at least 30 percent of the time. That's the simple explanation. It gets far more extreme and intense than that. Turing was a genius and thought of a lot of things that laypersons simply would not.

Here's another question: A machine built to take the Turing Test is essentially a chatbot trained to respond in a certain way to the questions and statements we pose to it. There are many kinds of chatbots created to pass the test. The question: Are these machines thinking or are they just really good at assembling responses to our inputs?

By the end of the 20th century, machines were still, by and large, far below the standards Turing imagined. Humans are complex and we have complex challenge-response language that often requires real knowledge, and machines often just couldn't cut the mustard.

A barrage of objections to Turing's theories was lobbed, and Turing's discussions of the objections were complete and thoughtful. It is far beyond the context and scope of this document to talk about all of the contributions that Turing made to the field of AI through the careful handling of these objections.

You can look up the objections and answers using the information below:

- The 'Theological' Objection

- The 'Heads in the Sand' Objection

- The 'Mathematical' Objection

- The Argument from Consciousness

- Arguments from Various Disabilities

- Lady Lovelace's Objection

- Argument from Continuity of the Nervous System

- Argument from Informality of Behavior

- Argument from Extra-Sensory Perception

These are amazing-- and to me particularly, the Arguments from Various Disabilities is the most poignant. It is an argument about how a computer may never be able to assess or purposefully exhibit beauty, kindness, resourcefulness, friendliness, have its own ambition and initiative, have a true sense of humor, and more. It is one of the most solid objections to thinking machines. It is a philosophical conundrum to this very day.

Frank Rosenblatt, the Father of AI, was an American psychologist who is primarily notable in the field of AI, and is sometimes called the "father of deep learning" as he was the pioneer in the field of artificial neural networks.

For his PhD thesis, Rosenblatt designed and built a custom computer, the Electronic Profile Analyzing Computer or the EPAC, whose design was to perform "multidimensional analysis" for psychometrics. Multidimensional analysis, in its simplest form is the computation of data in two or more categories. Race speeds of drag cars over the right and left lanes over multiple years of races would be data that could use multidimensional analysis. It is possible to have datasets that extend into higher dimensions, which increases the computational complexity.
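As a small, hedged illustration of the drag racing example, here is how that kind of data could be summarized along one or two dimensions at a time in Python. The race records, the speeds, and the `average_by` helper are all made up for this sketch, and this is a simple grouped average rather than the psychometric analysis the EPAC was built for.

```python
from collections import defaultdict

# Hypothetical race records: (year, lane, speed in mph).
RACES = [
    (2022, "left", 198.0), (2022, "right", 202.0),
    (2022, "left", 202.0), (2023, "right", 205.0),
    (2023, "left", 199.0), (2023, "right", 207.0),
]

# Which column of a record holds which dimension.
COLUMNS = {"year": 0, "lane": 1}


def average_by(records, *dimensions):
    """Average speed for every combination of the chosen dimensions."""
    totals = defaultdict(lambda: [0.0, 0])
    for record in records:
        key = tuple(record[COLUMNS[d]] for d in dimensions)
        totals[key][0] += record[2]
        totals[key][1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


print(average_by(RACES, "lane"))            # one dimension
print(average_by(RACES, "year", "lane"))    # two dimensions
```

Each dimension you add multiplies the number of combinations to track, which is where the extra computational complexity comes from.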

Rosenblatt is probably best known for the Perceptron, a device from 1957 that was built on biological principles and showed an ability to learn from its previous runs. The program ran on a computer that had an "eye", and when a triangle was held in front of the "eye", it would send the image along a random succession of lines to "response units", where the image of the triangle was registered in memory. The entire process was simulated on an IBM 704 system.

The perceptron was used by the US National Photographic Interpretation Center to develop a useful algorithm that could ease the burden on human photo interpreters.

The Mark I Perceptron, the custom hardware version that followed the IBM 704 simulation, had 3 layers. One version of the Mark I was as follows:

- An array of 400 photocells arranged in a 20x20 grid, which were named "sensory units", S-Units, or the "input retina". Each S-Unit can connect to up to 40 A-Units.

- A hidden layer of 512 perceptrons, which were called "association units" or "A-Units"

- An output layer of 8 perceptrons, which were called "response units" or "R-Units"

The S-Units are randomly wired to A-Units with a plugboard, meant to eliminate any particular intentional bias in the perceptron. These S-Unit to A-Unit connection weights are fixed and not learned; the learning happens in the adjustable weights between the A-Units and the R-Units. Rosenblatt designed the machine to closely imitate human visual perception.

The perceptron was touted by the Navy, who expected that soon the perceptron would be able to walk, talk, see, write and reproduce itself and also to perform the apex of AI, be conscious of its own existence. The CIA would use the Perceptron to recognize militarily interesting photographs for 4 years from 1960 to 1964. However, the device itself proved that perceptrons could not recognize many classes of patterns. This caused research in the area of neural networks to slow to a stagnant crawl for years until AI scientists discovered that feed-forward neural networks or multilayer perceptrons had greater power to recognize images than a single-layer approach.

To completely explain the perceptron would require a PhD in mathematics, but the idea of the perceptron unlocked weighted inputs, bias, multiple inputs, and the idea of the artificial neuron. Perceptrons, as an idea, have expanded into the core of AI, far further than Rosenblatt could have ever imagined, and the field of the perceptron is awash in mathematics in the modern era.
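The core idea is small enough to sketch in Python, though. What follows is the classic textbook perceptron learning rule with a single artificial neuron, not a model of Rosenblatt's Mark I hardware; the function names and the AND and XOR examples are my own choices for illustration.

```python
def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum of the inputs plus the bias is positive."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Nudge the weights toward the right answer after every mistake."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)   # -1, 0, or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# AND: output 1 only when both inputs are 1. A single neuron can learn this.
AND_GATE = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
and_weights, and_bias = train_perceptron(AND_GATE)
print([predict(and_weights, and_bias, x) for x, _ in AND_GATE])

# XOR: output 1 when exactly one input is 1. No single neuron can get all
# four cases right, which is the kind of limitation that stalled the field.
XOR_GATE = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
xor_weights, xor_bias = train_perceptron(XOR_GATE)
print([predict(xor_weights, xor_bias, x) for x, _ in XOR_GATE])
```

The weights, the bias, and the "fire or don't fire" decision are the same ingredients that today's much larger networks still use, just stacked in many layers.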

John McCarthy, the Father of AI, was a computer scientist and cognitive scientist. He is regarded as one of the founders of the discipline we call artificial intelligence.

John McCarthy was the co-author of a document that coined the term "artificial intelligence". He was the developer of the computer programming language LISP.

He popularized computer time-sharing, a system where many programs can run at once while sharing slices of time between each program. This also allowed multi-user environments where scientists, students, or enthusiasts could run programs and experiments without scheduling time with the system operator.
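A rough way to picture those slices of time is a simple "round robin" scheduler, sketched here in Python. This is my own toy illustration, not McCarthy's design or how a real operating system is written: the job names, the amounts of work, and the `round_robin` function are all hypothetical.

```python
from collections import deque


def round_robin(jobs, time_slice):
    """Run jobs in turn, each for at most `time_slice` units, until all finish.

    `jobs` maps a job name to the total units of work it needs.
    Returns the list of (name, units_run) slices in the order they ran.
    """
    queue = deque(jobs.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        run = min(time_slice, remaining)
        timeline.append((name, run))
        if remaining > run:
            # Not finished yet: go to the back of the line.
            queue.append((name, remaining - run))
    return timeline


schedule = round_robin({"browser": 5, "music": 2, "chat": 3}, time_slice=2)
for name, units in schedule:
    print(name, units)
```

Because the turns are short and come around quickly, every program appears to be running at the same time even though only one of them has the processor at any instant.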

John McCarthy invented "garbage collection" which is a system by which a program will determine data that is no longer needed for operations and can be cleared from memory. If a large chunk of memory is allocated to the program, and it is no longer needed, the memory can be freed by a garbage collection routine, freeing programmers and operators from the nasty task of manual memory management.
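Here is a minimal sketch of one well-known garbage collection strategy, called mark-and-sweep, written in Python. The toy "heap" of named objects and the `collect_garbage` function are assumptions made up for this illustration; real collectors work on raw memory and are far more involved.

```python
def collect_garbage(heap, roots):
    """Mark-and-sweep over a toy heap.

    `heap` maps an object name to the list of names it references.
    `roots` are the names the running program can still reach directly.
    Returns the set of names that were freed.
    """
    # Mark: follow references outward from the roots.
    reachable = set()
    pending = list(roots)
    while pending:
        name = pending.pop()
        if name in reachable or name not in heap:
            continue
        reachable.add(name)
        pending.extend(heap[name])

    # Sweep: anything that was never marked is no longer needed.
    garbage = set(heap) - reachable
    for name in garbage:
        del heap[name]
    return garbage


toy_heap = {
    "config": ["log"],
    "log": [],
    "old_report": ["temp"],   # nothing in use points at these two,
    "temp": ["old_report"],   # even though they point at each other
}
freed = collect_garbage(toy_heap, roots=["config"])
print(sorted(freed))
print(sorted(toy_heap))
```

The programmer never has to say "free old_report". The routine works out on its own that nothing still in use can reach it.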

John McCarthy is one of the "founding fathers" of AI, sharing that rare company with Marvin Minsky, Allen Newell, Herbert Simon, and Alan Turing. The term "artificial intelligence" was coined in a proposal written by McCarthy, Minsky, Nathaniel Rochester, and Claude Shannon for the Dartmouth conference in 1956, where AI was started as an actual field in computing.

In 1958 McCarthy proposed the "Advice Taker" which was a hypothetical computer program devised by McCarthy that would use logic to represent the information in a computer and not just as subject matter from another program. This paper may have also been the very first to propose common sense reasoning ability as the key to AI. This proposal is still being evaluated today.

The Advice Taker later inspired work on question answering and logic programming, but time-sharing systems are the most illuminated example of a legacy because every computer in use today uses some sort of time-sharing system to run all of the programs that we have running at once. Imagine if we could only run ONE tab in a browser... only the browser and no music running at the same time. We could only get our notifications for all of our social media if we stop what we are doing and run the social media app to give it all of the computer time. Computing would be a nightmare!

In 1966, at Stanford, McCarthy and his team wrote a program that was used to play a few chess games with counterparts in what was then known as the Soviet Union. The program lost two games and drew two games.

In 1979, McCarthy wrote an article called "Ascribing Mental Qualities to Machines", where he wrote "Machines as simple as thermostats can be said to have beliefs, and having beliefs seems to be a characteristic of most machines capable of problem-solving performance."

In 1980 John Searle responded to McCarthy saying that machines cannot have beliefs because they are not conscious, and that machines lack "intentionality", which is the mental ability to refer to or represent something-- the ability of one's mind to create representations of something that may or may not be complete. It is a philosophical concept applied to machines.

Minsky, the Father of AI, is credited with helping to create today's vision of Artificial Intelligence. Following Minsky's Navy service from 1944 to 1945, he enrolled in Harvard University in 1946, where he was free to explore his intellectual interests to their fullest and in that vein, he completed research in physics, neurophysiology, and psychology. He graduated with honors in mathematics in 1950. He was truly a busy guy with his finger in a lot of pies!

Not content, he enrolled in Princeton University in 1951 and while there he built the world's first neural network simulator. After earning his doctorate in mathematics at Princeton, Minsky returned to Harvard in 1954. In 1955, Minsky invented the confocal scanning microscope.

Marvin had fire, and in 1957 Minsky moved to the Massachusetts Institute of Technology in order to pursue his interest in modeling and understanding human thought using machines.

Minsky was joined at MIT by others who were interested in AI, such as John McCarthy, an MIT professor of Electrical Engineering and the creator and developer of the LISP programming language. McCarthy contributed to the development of time-sharing on computers, a method where multiple programs were given small slices of time very quickly to accomplish their tasks. This made it appear that the computer was doing several things at once. This allowed multiple users to connect to one system to get work done without anyone having to manually schedule time for each user.

In 1959 Minsky and McCarthy joined forces and cofounded the Artificial Intelligence Project. It quickly became ground zero for research in the nascent field of Artificial Intelligence. The project grew into the MIT Artificial Intelligence Laboratory, which merged with MIT's Laboratory for Computer Science in 2003 to become the MIT Computer Science and Artificial Intelligence Laboratory. Catchy, eh? Those in the know call it CSAIL, which is a lot easier to pronounce and write.

Minsky finally found a home at MIT and stayed there for the rest of his career.

Minsky had a definition of AI, "the science of making machines do things that would require intelligence if done by men", but AI researchers found it hard to catch that lightning in a bottle, finding it extraordinarily difficult to capture the essence of the entire world in the syntax of computers of the day. Even the most powerful computers in the world, and the most powerful languages to run them, were not up to the task.

In 1975, Minsky came up with the concept of "frames" to capture the precise information that must be programmed into a computer before offering more specific direction. For example, to capture our world, a computer must understand the concept of doors, and that doors may be locked. They may swing only in one direction, or both. Doors may slide, either direction or up or even down. A door may or may not have a knob that may turn one direction, the other, or both. So in a frame, doors are described in a way that a program may understand. Now, we should be able to tell an AI how to navigate a simple set of connected rooms.
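A frame can be pictured as a named bundle of "slots" with sensible defaults, as in this minimal Python sketch. The `Frame` class, the slot names, and the sliding door example are my own hypothetical illustration of the general idea, not Minsky's notation.

```python
from dataclasses import dataclass, field


@dataclass
class Frame:
    """A named bundle of slots; missing slots fall back to the parent frame."""
    name: str
    slots: dict = field(default_factory=dict)
    parent: "Frame | None" = None

    def get(self, slot):
        frame = self
        while frame is not None:
            if slot in frame.slots:
                return frame.slots[slot]
            frame = frame.parent   # not described here, so ask the parent
        raise KeyError(slot)


# The general idea of a door, with default expectations.
door = Frame("door", {"can_lock": True, "motion": "swings", "has_knob": True})

# A more specific kind of door only states what is different.
sliding_door = Frame(
    "sliding door", {"motion": "slides", "has_knob": False}, parent=door
)

print(sliding_door.get("motion"))     # overridden by the specific frame
print(sliding_door.get("can_lock"))   # inherited from the general frame
```

The program only has to be told what makes a sliding door unusual. Everything else it already "knows" from the general door frame.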

Minsky expanded this view when he wrote "The Society of Mind" in 1985. He proposed that the mind is composed of many individual agents performing basic functions, such as telling the body when it is hungry or comparing two boxes of macaroni at the store for weight, nutrition, and price. The criticism, however, is that "The Society of Mind" is not useful to AI researchers and is useful only for the enlightenment of AI laypersons.

Minsky wrote other books, all the way up to 2006, all of which contained theories regarding higher-level emotions.

To avoid typing the name over and over again, I'm going to use JVN to signify John von Neumann, the Father of AI, because I'm only human and the repetition of his name may drive me mad.

JVN pioneered many of the foundations of the modern computer, such as the idea of RAM (which JVN posited could be an abstraction of the human brain) and the idea of long-term storage, or long-term memory, which later took the form of the hard drive.

The first hard drive I ever worked with was a 5MB hard drive in a box that was 1 meter cubed and rattled like a bucket with a bunch of bolts in it, but it drove production in our hospital and was important to our organization.

JVN wasn't done with just RAM and hard drives. He wanted to make sure that his name was cemented in computing history, so he also drafted the theoretical model for what is now known as a CPU in his 1945 paper "First Draft of a Report on the EDVAC."

Alan Turing spent time at Princeton in the 1930s, where he crossed paths with JVN, so it was no wonder that Turing was excited to continue JVN's work.

JVN came up with the idea of a 'universal constructor', a self-replicating machine whose job would be to construct other machines. It grew out of the theory of cellular automata, which JVN developed in the 1940s.

Cellular automata are models (in the mathematical sense) designed to simulate the behavior of complex systems. They do this by breaking the systems down into simple components that are discrete and easy to predict. Of course this goes even deeper. These men were not clowns.
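Here is a small sketch of a cellular automaton you can run yourself. Fair warning: this is a one-dimensional, two-state automaton, a far simpler cousin of the ones JVN studied, and I picked it only because it fits on one screen. The function names and the choice of rule 90 are my own assumptions for illustration.

```python
def step(cells, rule=90):
    """Advance a one-dimensional, two-state cellular automaton by one generation.

    Each cell looks only at itself and its two neighbors (wrapping at the edges).
    `rule` is a rule number from 0 to 255 whose bits say what each
    three-cell neighborhood turns into.
    """
    n = len(cells)
    new_cells = []
    for i in range(n):
        left, center, right = cells[(i - 1) % n], cells[i], cells[(i + 1) % n]
        pattern = (left << 2) | (center << 1) | right  # a number from 0 to 7
        new_cells.append((rule >> pattern) & 1)        # look up that bit in the rule
    return new_cells


def run(width=31, generations=16, rule=90):
    """Start from a single live cell in the middle and collect every generation."""
    cells = [0] * width
    cells[width // 2] = 1
    history = [cells]
    for _ in range(generations - 1):
        cells = step(cells, rule)
        history.append(cells)
    return history


for row in run():
    print("".join("#" if c else "." for c in row))
```

Every cell follows the same tiny rule and knows nothing beyond its two neighbors, yet the printout shows a large nested pattern of triangles. That gap between simple parts and complex behavior is the whole appeal of the idea.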

JVN was a Hungarian-born American mathematician, physicist, computer scientist, and "father of AI" to some. He made huge contributions to set theory and the then-emerging field of game theory, along with early work on numerical methods for solving systems of linear equations, work that still shapes numerical analysis today.

JVN also worked on human memory, proposing an explanation of how our brains store and retrieve information. According to his theories, memories are stored in the neurons of the brain as patterns of electricity that can be stored and retrieved with a mathematical algorithm.

If you work in or have studied AI, you probably know where I'm going here, but if you don't... keep reading.

JVN proposed the "learning machine" which, as the name suggests, is a machine designed to improve over time by learning from various inputs, including human intervention.
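To give a feel for what "improving over time" can mean, here is a tiny sketch of a machine that learns from corrections. To be clear, this is not JVN's design. I have swapped in a perceptron-style error-correction rule, a later technique, purely as an illustration, and the names `train`, `predict`, and `AND_EXAMPLES` are my own.

```python
def train(examples, passes=20, learning_rate=1):
    """Learn weights for a single threshold unit from labeled examples.

    `examples` is a list of ((x1, x2), label) pairs with label 0 or 1.
    The labels play the role of a human teacher correcting the machine.
    """
    weights = [0, 0]
    bias = 0
    for _ in range(passes):
        for (x1, x2), label in examples:
            guess = 1 if weights[0] * x1 + weights[1] * x2 + bias > 0 else 0
            error = label - guess  # -1, 0, or +1: how wrong the guess was
            # Nudge the weights toward the answer the teacher wanted.
            weights[0] += learning_rate * error * x1
            weights[1] += learning_rate * error * x2
            bias += learning_rate * error
    return weights, bias


def predict(weights, bias, x1, x2):
    """Answer 1 or 0 using whatever the machine has learned so far."""
    return 1 if weights[0] * x1 + weights[1] * x2 + bias > 0 else 0


# Teach the machine logical AND: answer 1 only when both inputs are 1.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train(AND_EXAMPLES)
print([predict(weights, bias, x1, x2) for (x1, x2), _ in AND_EXAMPLES])
```

The machine starts out knowing nothing and gets some answers wrong. Each wrong answer adjusts its numbers a little, and after a handful of passes it answers all four cases correctly.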

JVN's contributions to mathematics and computing are more staggering than I can possibly give him credit for.

As time went on, JVN came to be credited with an early form of the Technological Singularity Hypothesis, which describes a process by which ever-accelerating technology reaches a point of no return and changes the mode of human life to one where there is little difference between man and machine.

The theory of cellular automata influences AI to this day and remains a common approach to research on self-replicating and self-teaching machines.

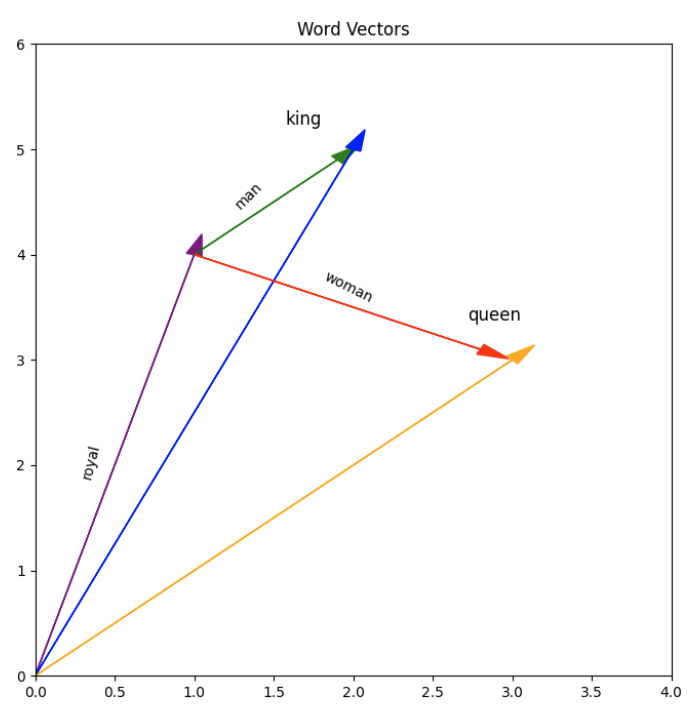

Ben Vierck, Word Vector Illustration, CC0 1.0

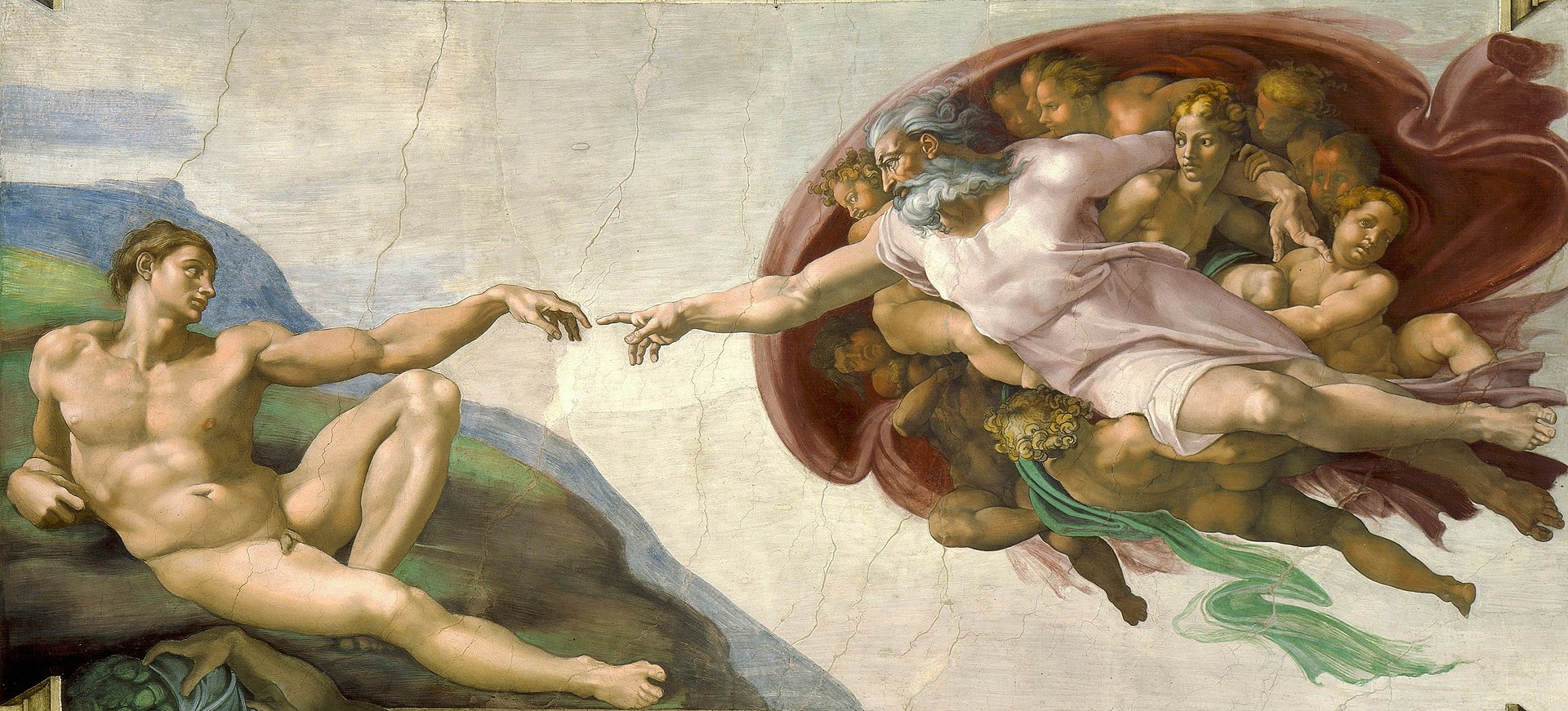

By Michelangelo, Public Domain, https://commons.wikimedia.org/w/index.php?curid=9097336